
Here is my crawler code for downloading images from Jandan (煎蛋):

    import urllib.request
    import os

    def url_open(url):

        req = urllib.request.Request(url)
        req.add_header('User-Agent','Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/60.0.3112.90 Safari/537.36')
        response = urllib.request.urlopen(url)
        html = response.read()

        return html

    def get_page(url):

        html = url_open(url).decode('utf-8')
        a = html.find('current-comment-page') + 23
        b = html.find(']',a)

        return html[a:b]

    def find_imgs(url):
        html = url_open(url).decode('utf-8')
        img_addrs = []
        # After inspecting the element, the src attribute comes after style. How should this be handled?
        a = html.find('img style="max-height: 750px; max-width: 480px;" src=')
        while a != -1:
            b = html.find('.jpg',a,a+255)
            if b != -1:
                img_addrs.append(html[a+53:b+4])
            else:
                b = a + 53

            a = html.find('img style="max-height: 750px; max-width: 480px;" src=',b)

        return img_addrs

    def save_imgs(folder,img_addrs):
        for each in img_addrs:
            filename = each.split('/')[-1]
            with open(filename,'wb') as f:
                img = open_url(each)
                f.write(img)

    def download_mm(folder = 'mm',pages = 5):
        os.mkdir(folder)
        os.chdir(folder)
        url = "http://jandan.net/ooxx/"
        page_num = int(get_page(url))
        for i in range(pages):
            page_num -= i
            page_url = url + 'page- ' + str(page_num) + '#comments'
            img_addrs = find_imgs(page_url)
            save_imgs(folder,img_addrs)

    if __name__ == '__main__':
        download_mm()

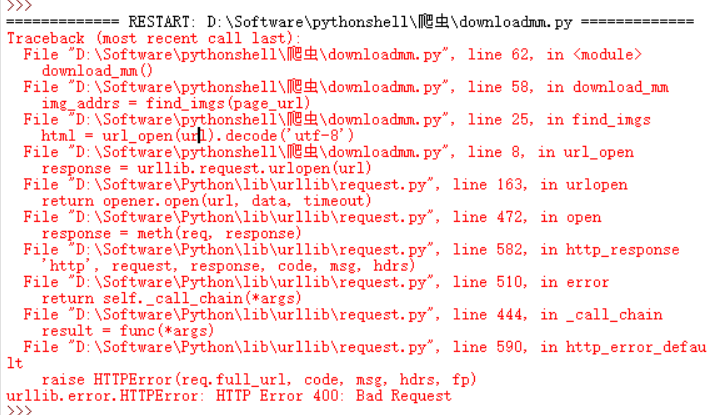

Running it produces the following error:

Error message

Could someone tell me what the problem is? Thanks!
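
The traceback is only available as a screenshot, so the exact error cannot be confirmed from the text. Reading the listing, a few spots look like plausible causes: `save_imgs` calls `open_url`, while the function defined is `url_open` (this would raise a `NameError`); `url_open` builds `req` with a User-Agent but then passes the bare `url` to `urlopen`, so the header is never sent; `'page- '` puts a space into the page URL; `page_num -= i` skips pages because it subtracts a growing `i` each time; and `html[a+53:b+4]` starts at the opening quote of the `src` value, since the search string is exactly 53 characters long. The sketch below is one possible way to address these, not a confirmed fix. The names `USER_AGENT`, `ImgSrcParser`, `find_imgs_in_html`, `page_numbers`, and `page_url` are hypothetical helpers introduced here, and the site's current markup is assumed rather than verified.

```python
import urllib.request
from html.parser import HTMLParser

# Assumption: any browser-like User-Agent string; shortened here for readability.
USER_AGENT = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"


def url_open(url):
    """Fetch raw bytes, passing the Request object (with its header) to urlopen."""
    req = urllib.request.Request(url, headers={"User-Agent": USER_AGENT})
    with urllib.request.urlopen(req) as response:  # req, not the bare url
        return response.read()


class ImgSrcParser(HTMLParser):
    """Collect the src of every <img> tag, regardless of attribute order."""

    def __init__(self):
        super().__init__()
        self.srcs = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            src = dict(attrs).get("src")
            # Keep only .jpg images, as the original listing does.
            if src and src.lower().endswith(".jpg"):
                self.srcs.append(src)


def find_imgs_in_html(html):
    """Return the .jpg image addresses found in an HTML string."""
    parser = ImgSrcParser()
    parser.feed(html)
    return parser.srcs


def page_numbers(latest, pages):
    """Count down one page at a time from the latest page number."""
    return [latest - i for i in range(pages)]


def page_url(base, page_num):
    """Build the page address without the stray space after 'page-'."""
    return f"{base}page-{page_num}#comments"


def save_imgs(img_addrs):
    """Download each image into the current directory."""
    for each in img_addrs:
        filename = each.split("/")[-1]
        with open(filename, "wb") as f:
            f.write(url_open(each))  # url_open, the name that is actually defined
```

Parsing the tag with `html.parser` answers the question in the code comment: it does not matter whether `src` comes before or after `style`, because attributes are looked up by name instead of by a fixed character offset.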


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
