Last edited by wongyusing on 2018-12-11 13:19

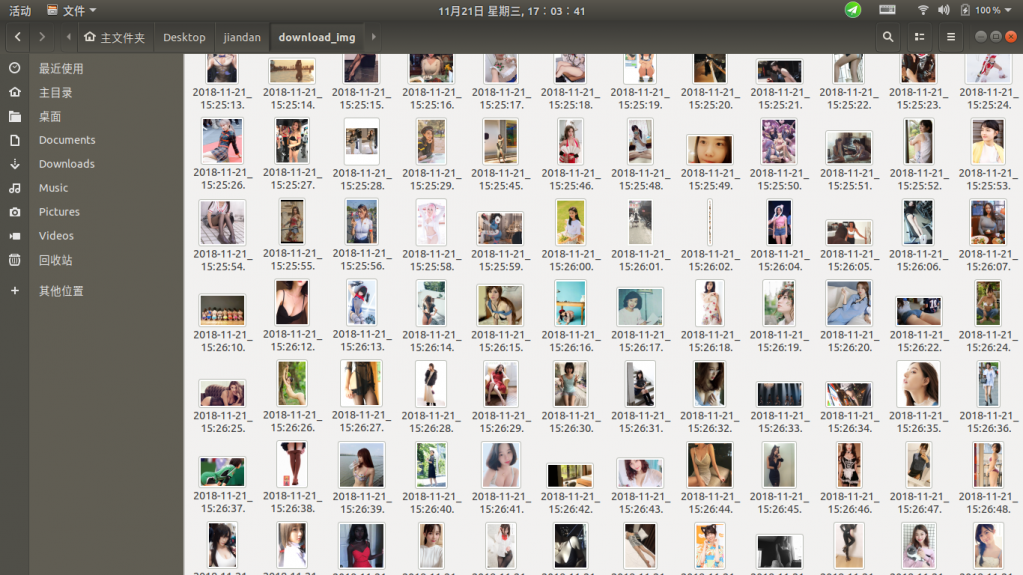

The result is shown in the screenshot above.

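Note: this needs Python 3 and node.js. It has not been tested on Windows.

So far it has only run successfully on Ubuntu.

The approach and reasoning are written up but not tidied yet, so for now I am posting only the code. I will clean up the explanation when I have time.

Make sure both node.js and python3 are set up in your environment variables (on your PATH) before running it.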
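A quick way to confirm both requirements before running the scraper is sketched below. It is only an illustration: `check_environment` is a made-up helper name, and the Python 3.6 minimum is an assumption based on the script using f-strings.

```python
import shutil
import sys


def check_environment(required_python=(3, 6)):
    """Return a list of problems; an empty list means the environment looks fine."""
    problems = []
    # f-strings, which the scraper uses, need Python 3.6 or newer.
    if sys.version_info < required_python:
        wanted = ".".join(str(part) for part in required_python)
        problems.append(f"Python {wanted}+ is required")
    # shutil.which returns None when the executable is not on PATH.
    if shutil.which("node") is None:
        problems.append("node was not found on PATH")
    return problems


for problem in check_environment():
    print("environment problem:", problem)
```

If nothing is printed, both `python3` and `node` should be reachable from the shell that runs the script.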

Got bored and wrote a crawler

https://fishc.com.cn/forum.php?m ... peid%26typeid%3D729

Got bored and wrote another crawler

https://fishc.com.cn/forum.php?m ... peid%26typeid%3D729

Got bored and wrote yet another crawler

https://fishc.com.cn/forum.php?m ... peid%26typeid%3D729

Scraping jandan.net (煎蛋网) with requests and node.js

Modify the jiandan.py from that post as follows:

- import requests

- import os

- import time

- from bs4 import BeautifulSoup as bs

- # Fetch a page

- def get_response(url):

-     headers = {

-         'User-Agent': "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/62.0.3202.75 Safari/537.36"}

-     response = requests.get(url, headers=headers)  # send a browser User-Agent to avoid being blocked (must be passed by keyword)

-     response.encoding = 'utf-8'  # set the encoding

-     # response.encoding = 'gbk'  # alternative encoding

-     return response

- # Write the JS file

- def writeFile(content):

-     # The with block closes the file, so no explicit close is needed

-     with open('js/cest.js', 'w', encoding='utf-8') as txt_file:

-         txt_file.write("var JianDan = require('./main');\n")

-         txt_file.write(f'var e = "{content}";\n')

-         txt_file.write('hello = new JianDan(e);\n')

- # Get and download the image

- def get_img():

-     # Run the JS code and prefix its output with 'http:' to form the image URL

-     url = 'http:' + os.popen("node js/cest.js").read().strip()

-     headers = {

-         'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8',

-         'Accept-Encoding': 'gzip, deflate',

-         'Accept-Language': 'zh-CN,zh;q=0.9',

-         'Cache-Control': 'no-cache',

-         'Connection': 'keep-alive',

-         'Pragma': 'no-cache',

-         'Upgrade-Insecure-Requests': '1',

-         'User-Agent': 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',

-     }

-     response = requests.get(url=url, headers=headers)

-     suffix = url[-4:]  # this is the changed line

-     file_time = time.strftime("%Y-%m-%d_%H:%M:%S", time.localtime())

-     print(suffix)

-     # Create the download directory if it does not exist yet

-     os.makedirs('download_img', exist_ok=True)

-     path = f'download_img/{file_time}{suffix}'

-     print(path)

-     with open(path, 'wb') as f:

-         f.write(response.content)

- # Main function

- def main():

-     url = 'http://jandan.net/ooxx'

-     response = get_response(url)

-     soup = bs(response.text, 'lxml')

-     # Get the highest page number (the text is wrapped in square brackets)

-     max_pages = int(soup.select('.cp-pagenavi .current-comment-page')[0].text.replace('[', '').replace(']', '')) + 1

-     for i in range(1, max_pages):

-         url = f'http://jandan.net/ooxx/page-{i}'

-         response = get_response(url)

-         soup = bs(response.text, 'lxml')

-         # Get the encoded hashes

-         print(f'>>>>>>>>>>>>>>>>>>>>>>now on page {i}')

-         for img_hash in soup.select('.commentlist .img-hash'):

-             # Write the JS code

-             writeFile(img_hash.text)

-             # Get the real link and download the image

-             get_img()

- if __name__ == '__main__':

-     main()
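One thing to watch in `get_img`: the file name is a timestamp with one-second resolution, and the extension is just the last four characters of the URL. Two images saved within the same second therefore end up at the same path, and a link ending in `.jpeg` loses its dot. A possible way around this is sketched below. It is not part of the original script: `build_image_path` and its `index` counter are hypothetical, and falling back to `.jpg` when the URL has no extension is an assumption.

```python
import os
import time
from urllib.parse import urlparse


def build_image_path(url, directory="download_img", index=0):
    """Build a save path that is unique per image and free of colons."""
    # Take the extension from the URL path only, ignoring any query string.
    ext = os.path.splitext(urlparse(url).path)[1]
    if not ext:
        ext = ".jpg"  # assumption: default extension when none is present
    # Hyphens instead of colons keep the name valid on more filesystems.
    stamp = time.strftime("%Y-%m-%d_%H-%M-%S", time.localtime())
    # The running index separates images saved within the same second.
    return os.path.join(directory, f"{stamp}_{index:04d}{ext}")


print(build_image_path("http://example.com/large/abc.jpeg", index=3))
```

To use it, `get_img` would need to accept a counter from the loop in `main` and pass it on as `index`.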

( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
