Bounty: 10 fish coins

```python
import requests
import bs4
import re  # imported but never used in this script


def open_url(url):
    # Use a proxy (disabled). Note: a dict cannot hold two 'https' keys;
    # the second entry silently overwrites the first.
    # proxies = {'https':'223.243.174.152:9999','https':'223.214.205.179:9999'}
    headers = {'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/99.0.4844.74 Safari/537.36 Edg/99.0.1150.46'}
    res = requests.get(url, headers=headers)
    return res


def find_movies(res):
    # "Make the soup": parse the HTML
    soup = bs4.BeautifulSoup(res.text, 'html.parser')

    # Movie titles
    movies = []
    targets = soup.find_all('div', class_='hd')
    for each in targets:
        movies.append(each.a.span.text)

    # Ratings ('评分' means "rating")
    ranks = []
    targets = soup.find_all('span', class_='rating_num')
    for each in targets:
        ranks.append('评分%s' % each.text)

    # Details (director, cast, ...)
    messages = []
    targets = soup.find_all('div', class_='bd')
    for each in targets:
        try:
            lines = each.p.text.split('\n')
            messages.append(lines[0].strip() + lines[1].strip())
        except (AttributeError, IndexError):
            # Some div.bd blocks have no <p> (each.p is None) or too few lines; skip them
            continue

    # Combine the three lists entry by entry
    result = []
    for movie, rank, message in zip(movies, ranks, messages):
        result.append(movie + rank + message + '\n')
    return result
```
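One thing worth checking in the details step: if the `<p>` text begins with a newline (an assumption about Douban's markup; printing `repr(each.p.text)` would confirm it), then `split('\n')[0]` is an empty string and `[0] + [1]` yields only one line of details instead of two. A minimal sketch of a more tolerant split, using a hypothetical helper `first_two_lines` and a made-up sample string, keeps only the non-empty lines:

```python
def first_two_lines(text):
    """Return the first two non-empty, stripped lines of text, joined by a space.

    Hypothetical helper: unlike split('\\n')[0] + split('\\n')[1], it does not
    lose a line when the text starts with a blank line.
    """
    lines = [line.strip() for line in text.split('\n') if line.strip()]
    return ' '.join(lines[:2])


# Made-up sample shaped like a block of movie details
sample = '\n    Director: A / Cast: B\n    1994 / USA / Drama\n    '
print(repr(sample.split('\n')[0].strip()))  # prints '' (the leading blank line)
print(first_two_lines(sample))
```

Inside `find_movies`, the `messages.append(...)` call could then take `first_two_lines(each.p.text)` instead of indexing the split result directly.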

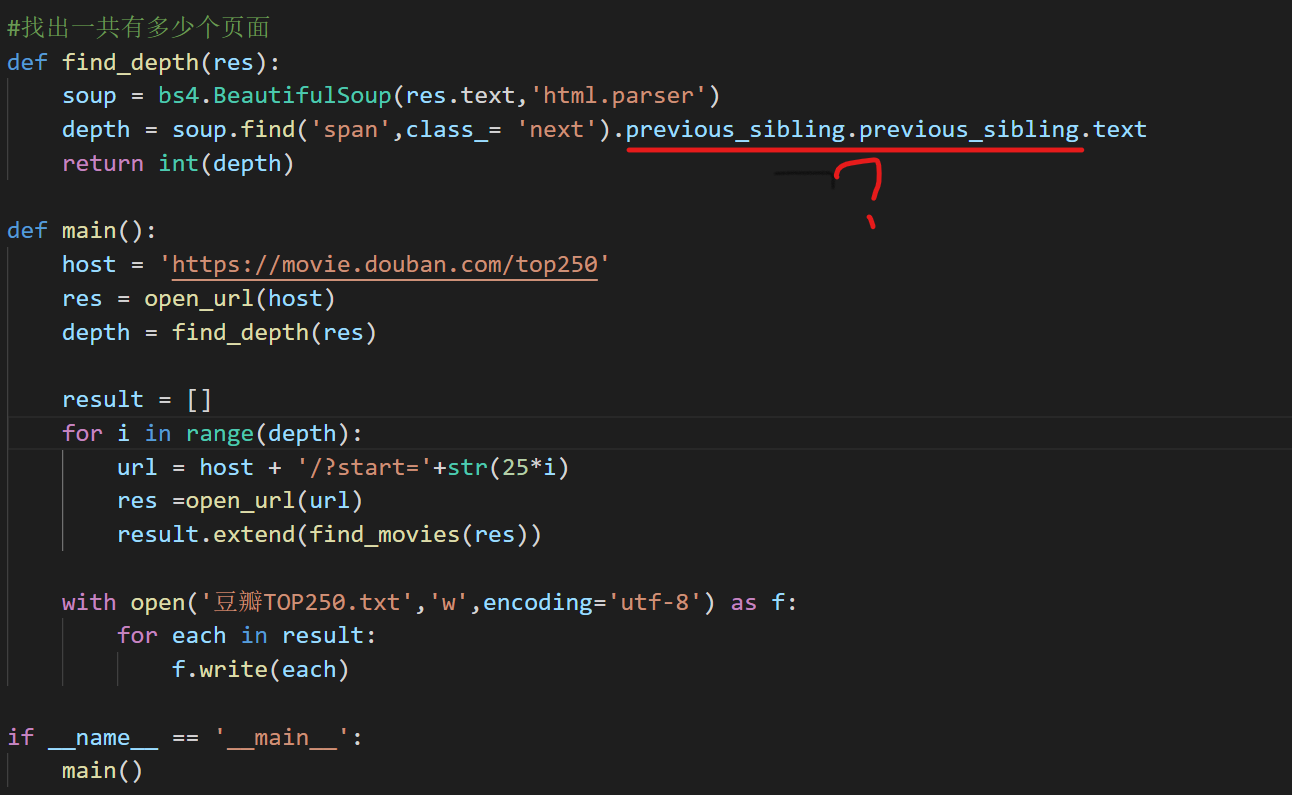

```python
# Find out how many pages there are in total
def find_depth(res):
    soup = bs4.BeautifulSoup(res.text, 'html.parser')
    depth = soup.find('span', class_='next').previous_sibling.previous_sibling.text  # What does this line mean?
    return int(depth)
```
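On the question in the comment: `soup.find('span', class_='next')` locates the "next page" span in the paginator, and `.previous_sibling` steps back one node at the same nesting level. With `html.parser`, the first step typically lands on the whitespace/newline text between two tags (a `NavigableString`), so a second `.previous_sibling` is needed to reach the element before it: the link for the last page number, whose text is the page count. The sketch below uses a simplified, made-up paginator snippet; it is an assumption about how the page is laid out, not a copy of Douban's HTML.

```python
import bs4

# Simplified, made-up paginator markup: tags separated by newlines
html = """
<div class="paginator">
<span class="thispage">1</span>
<a href="?start=25">2</a>
<a href="?start=225">10</a>
<span class="next"><a href="?start=25">next</a></span>
</div>
"""

soup = bs4.BeautifulSoup(html, 'html.parser')
next_span = soup.find('span', class_='next')

first = next_span.previous_sibling   # the '\n' text node between </a> and <span>
second = first.previous_sibling      # the <a> tag just before that text node
print(second.name, second.text)      # prints: a 10
depth = int(second.text)

# find_previous_sibling('a') skips text nodes and goes straight to the tag
alt = next_span.find_previous_sibling('a')
```

The double `.previous_sibling` only works as long as exactly one text node sits between the two tags; `find_previous_sibling('a')` does not depend on that whitespace.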

```python
def main():
    host = 'https://movie.douban.com/top250'
    res = open_url(host)
    depth = find_depth(res)

    result = []
    for i in range(depth):
        url = host + '/?start=' + str(25 * i)
        res = open_url(url)
        result.extend(find_movies(res))

    with open('豆瓣TOP250.txt', 'w', encoding='utf-8') as f:
        for each in result:
            f.write(each)


if __name__ == '__main__':
    main()
```
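The loop in `main` requests one URL per page: with 25 movies per page (the `25*i` in the code), page `i` starts at offset `25*i`. A small sketch of just that arithmetic, using a hypothetical helper `page_urls` and no network access:

```python
def page_urls(host, depth, per_page=25):
    """Build the same URLs as main(): host + '/?start=' + offset, one per page."""
    return [host + '/?start=' + str(per_page * i) for i in range(depth)]


urls = page_urls('https://movie.douban.com/top250', 10)
print(urls[0])   # https://movie.douban.com/top250/?start=0
print(urls[-1])  # https://movie.douban.com/top250/?start=225
```

With `depth = 10` this covers offsets 0 through 225, i.e. 10 × 25 = 250 movies.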


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
