
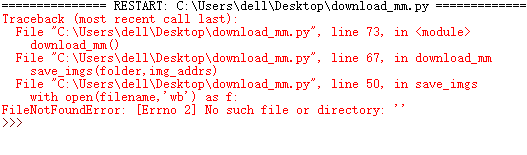

I followed the tutorial exactly. Could someone more experienced help me out?

import urllib.request
import os

def url_open(url):
    # Send a browser-like User-Agent header with the request.
    req = urllib.request.Request(url)
    req.add_header('User-Agent',"Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/49.0.2623.221 Safari/537.36 SE 2.X MetaSr 1.0")
    response = urllib.request.urlopen(req)
    html = response.read()
    #print(url)
    return html

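def get_page(url):
    html = url_open(url).decode('utf-8')
    # If 'current-comment-page' is missing, find() returns -1 and the
    # slice below returns unrelated HTML instead of a page number.
    a = html.find('current-comment-page') + len('current-comment-page">[')
    b = html.find(']',a)
    return html[a:b]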

def find_imgs(url):
    html = url_open(url).decode('utf-8')
    img_addrs = []
    a = html.find('img src=')
    while a != -1:
        b = html.find('.jpg',a,a+255)
        # find() returns -1 (not 1) when '.jpg' is not found.
        if b != -1:
            img_addrs.append(html[a+9:b+4])
        else:
            b = a + 9
        a = html.find('img src=',b)
    return img_addrs

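def save_imgs(folder,img_addrs):
    # `folder` is unused here: download_mm() has already changed into it,
    # so files are written to the current working directory.
    for each in img_addrs:
        filename = each.split('/')[-1]
        with open(filename,'wb') as f:
            img = url_open(each)
            f.write(img)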

def download_mm(folder='OOXX',pages=10):
    os.mkdir(folder)
    os.chdir(folder)
    url = 'http://jandan.net/ooxx/'
    page_num = int(get_page(url))
    for i in range(pages):
        # Step back one page per iteration; `page_num -= i` would subtract
        # 0, 1, 2, ... cumulatively and skip pages.
        page_url = url + 'page-' + str(page_num - i) + '#comments'
        img_addrs = find_imgs(page_url)
        save_imgs(folder,img_addrs)

if __name__ == '__main__':
    download_mm()

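I ran into this before too. 小甲鱼's videos were recorded quite a while ago, and I remember another forum member saying that jandan.net (煎蛋网) now encrypts its image addresses, so this approach can no longer scrape them.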
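One way to check that claim yourself is to list the direct `.jpg` links in whatever HTML the site returns. The sketch below is only an illustration: `ImgSrcCollector` and `collect_jpg_links` are hypothetical helper names, it uses the standard library's `html.parser` instead of the tutorial's `str.find` approach, and it assumes nothing about jandan.net's current markup. An empty result on a real page would be consistent with the image addresses no longer being in the HTML in plain form.

```python
from html.parser import HTMLParser


class ImgSrcCollector(HTMLParser):
    """Hypothetical helper: collects the src attribute of every <img> tag."""

    def __init__(self):
        super().__init__()
        self.srcs = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; value may be None.
        if tag == 'img':
            for name, value in attrs:
                if name == 'src' and value:
                    self.srcs.append(value)


def collect_jpg_links(html):
    """Return the src values of <img> tags that end in .jpg."""
    parser = ImgSrcCollector()
    parser.feed(html)
    return [src for src in parser.srcs if src.lower().endswith('.jpg')]


if __name__ == '__main__':
    # Made-up sample HTML, not taken from jandan.net.
    sample = '<p><img src="http://example.com/a.jpg"><img src="b.png" /></p>'
    print(collect_jpg_links(sample))
```

You could pass it the decoded result of `url_open(page_url)` from the script above to see what the page actually contains.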

This is the error output I get:

Traceback (most recent call last):
  File "F:\python保存\ooxx.py", line 61, in <module>
    download_mm()
  File "F:\python保存\ooxx.py", line 52, in download_mm
    page_num = int(get_page(url))
ValueError: invalid literal for int() with base 10: '>\r\n\t<head>\r\n\t\t<meta charset="UTF-8">\r\n\t\t<title>页面丢失了</title>\r\n\t\t<meta name="viewport" content="width=device-width, initial-scale=1.0, user-scalable=no, minimum-scale=1.0, maximum-scale

>>>
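The string in the ValueError is the start of an HTML page whose title is 页面丢失了 ("page lost"), which suggests the `current-comment-page` marker was not in the response. In that case `str.find` returns -1, the computed start index lands near the top of the document, and `int()` receives raw HTML. A minimal sketch of a more defensive parse is shown below; `extract_page_number` and `MARKER` are hypothetical names, and the marker format is taken only from the string used in the script above, not from the site's current markup.

```python
# Marker text copied from the original script; the real page may differ.
MARKER = 'current-comment-page">['


def extract_page_number(html):
    """Return the page number that follows MARKER, or raise a clear ValueError."""
    start = html.find(MARKER)
    if start == -1:
        # find() returns -1 when the marker is absent, e.g. on an error page.
        raise ValueError('page marker not found; the site may have returned an error page')
    start += len(MARKER)
    end = html.find(']', start)
    if end == -1:
        raise ValueError('closing "]" not found after the page marker')
    text = html[start:end]
    if not text.isdigit():
        raise ValueError('expected digits after the page marker, got %r' % text[:40])
    return int(text)


if __name__ == '__main__':
    # Made-up sample HTML in the shape the original script expects.
    print(extract_page_number('<span class="current-comment-page">[123]</span>'))
```

With a check like this, the script stops with a readable message when the site returns an error page, instead of passing HTML to `int()`.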


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
