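I've been tinkering with multiprocessing these past few days and keep hitting one problem I can't get around: getting the output in order when several workers run at once.

Simply put, once the crawler runs with multiple processes, different processes can fetch pages at the same time, but the data does not come back in the order in which the pages are listed.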
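The symptom can be reproduced without touching the network. The following is only a minimal sketch: `fake_fetch` and `run_demo` are hypothetical names, the sleep stands in for download time, and `multiprocessing.pool.ThreadPool` is used instead of `Pool` so the snippet stays self-contained (a shared list plays the role of the shared CSV file).

```python
import time
from multiprocessing.pool import ThreadPool

finished = []  # pages, in the order their work actually completed


def fake_fetch(page):
    """Hypothetical stand-in for scraper(url): sleep instead of downloading."""
    # Assumption for the demo: earlier pages happen to respond more slowly.
    time.sleep(0.05 * (4 - page))
    # Side effect performed inside the worker, like appending rows to the CSV.
    finished.append(page)
    return page


def run_demo():
    """Run four fake pages concurrently and report their completion order."""
    finished.clear()
    with ThreadPool(processes=4) as pool:
        pool.map(fake_fetch, range(4))
    return list(finished)
```

Calling `run_demo()` returns all four page numbers, but their order follows whichever worker finished first rather than the order of the input list, and it can differ from run to run.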

Is there a good way to solve this?

Taking the Douban Top 250 movies as an example, I wrote the following code:

    import time
    from multiprocessing import Pool

    import requests
    from bs4 import BeautifulSoup

    # Parse each page and append its rows to a CSV file; run via a process pool.
    hds = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/50.0.2661.102 Safari/537.36'}


    def scraper(url):
        r = requests.get(url, headers=hds)
        # print(r.status_code)
        soup = BeautifulSoup(r.text, 'lxml')
        infos = soup.select("div.item")
        for info in infos:
            num = info.select('div.pic em')[0].text
            name = info.span.text  # first <span> tag inside info
            rating = info.select("span.rating_num")[0].text
            try:
                comment = info.select("span.inq")[0].text.replace("\u22ef", "")
            except IndexError:  # this movie has no one-line quote
                comment = "[empty]"
            # print(num, name, rating, comment)
            # Each worker appends to the same file, so row order depends on timing.
            with open("movie_top250_3.csv", "a") as f:
                f.write("{},{},{},{}\n".format(num, name, rating, comment))


    if __name__ == '__main__':  # required for multiprocessing, otherwise it raises an error
        # Build the list of page URLs
        url_list = ["https://movie.douban.com/top250?start={}&filter=".format(str(i*25)) for i in range(0, 10)]
        # print(url_list)

        # Baseline: fetch the pages one by one in a single process
        start1 = time.time()
        for url in url_list:
            scraper(url)
        stop1 = time.time()
        print("Single process elapsed", stop1 - start1)

        start2 = time.time()
        with Pool(processes=2) as pool:
            pool.map(scraper, url_list)
        stop2 = time.time()
        print("2 processes elapsed", stop2 - start2)

        start3 = time.time()
        with Pool(processes=4) as pool:  # was processes=2, which did not match the label
            pool.map(scraper, url_list)
        stop3 = time.time()
        print("4 processes elapsed", stop3 - start3)

Results:

- Elapsed 4.628264665603638

- Elapsed 3.9972286224365234

- Elapsed 3.8952226638793945

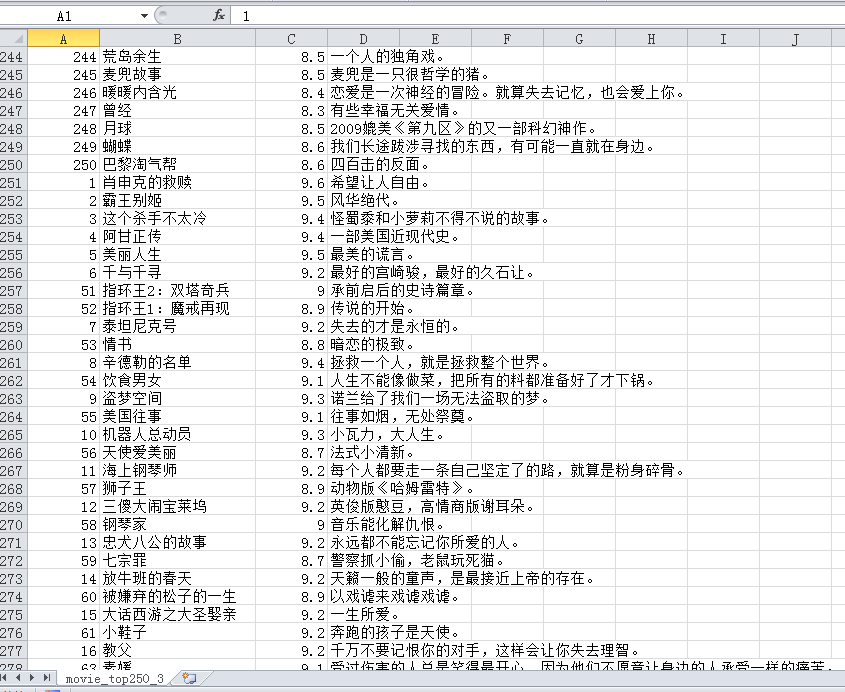

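Screenshot of the file:

The single-process output is in the right order, but in the 2-process and 4-process runs the order of the rows is not deterministic.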
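One direction that might help, offered as a sketch rather than a definitive fix: have each worker return its rows instead of writing them, and let the parent write the file. This relies on `map` returning results in the same order as its input list, regardless of which worker finishes first. The names `save_in_page_order`, `scrape_page` and `workers` are hypothetical, and a `ThreadPool` is assumed here to keep the snippet self-contained.

```python
import csv
from multiprocessing.pool import ThreadPool


def save_in_page_order(scrape_page, urls, csv_path, workers=4):
    """Scrape urls concurrently, then write every row from the parent.

    scrape_page is a hypothetical worker: it takes one URL and returns
    a list of row tuples (rank, name, rating, comment) without writing
    anything itself.
    """
    with ThreadPool(processes=workers) as pool:
        # map() blocks until all pages are done and returns the per-page
        # results in the same order as urls.
        pages = pool.map(scrape_page, urls)

    # Only the parent touches the file, so rows land in page order.
    with open(csv_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        for rows in pages:
            writer.writerows(rows)
    return sum(len(rows) for rows in pages)
```

If separate processes are preferred, `multiprocessing.Pool` exposes the same `map` method; the worker then has to be a picklable module-level function. Since every row already carries its rank in the first column, sorting the collected rows by that column before writing would be another option.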


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)