
Last edited by 瓜子仁 on 2018-3-13 19:15

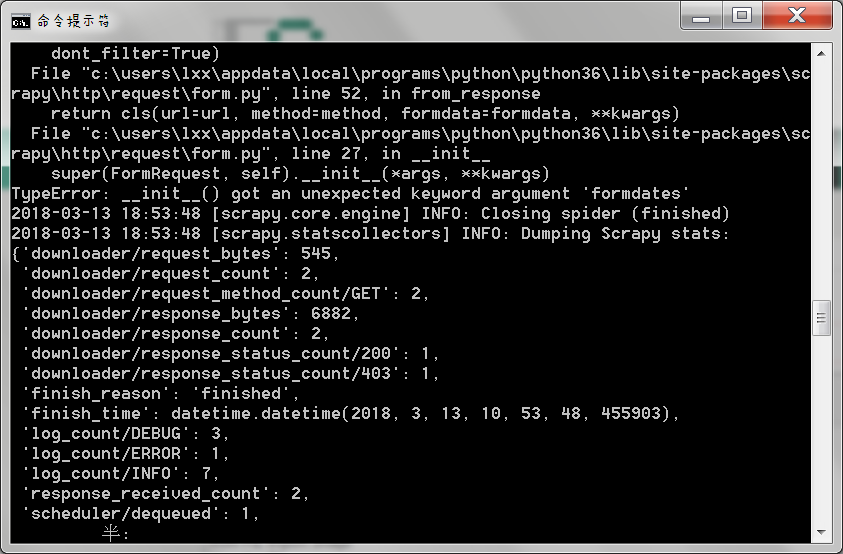

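This code is meant to simulate logging in to Douban. The earlier parts have been tested, so I suspect the problem is in the `return` statement on line 64.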

Output when run from cmd:

```
    super(FormRequest, self).__init__(*args, **kwargs)
TypeError: __init__() got an unexpected keyword argument 'formdates'
```
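The last traceback line points at `FormRequest.__init__` forwarding its keyword arguments to its parent class. The toy model below uses hypothetical stand-in classes called `Request` and `FormRequest`; it is not Scrapy's source, although Scrapy 1.x's `FormRequest.__init__` appears to follow the same pattern of popping `formdata` from `kwargs` before calling `super()`. It sketches why a misspelled keyword such as `formdates` is rejected by the parent's `__init__` rather than by `FormRequest` itself:

```python
class Request:
    """Hypothetical stand-in for a base request class with a fixed set of keywords."""

    def __init__(self, url, method="GET", headers=None, meta=None,
                 callback=None, dont_filter=False):
        self.url = url
        self.method = method
        self.headers = headers or {}
        self.meta = meta or {}
        self.callback = callback
        self.dont_filter = dont_filter


class FormRequest(Request):
    """Hypothetical stand-in subclass: consumes `formdata`, forwards everything else."""

    def __init__(self, *args, **kwargs):
        formdata = kwargs.pop("formdata", None)
        if formdata and kwargs.get("method") is None:
            kwargs["method"] = "POST"
        # Any keyword not consumed above (e.g. a typo like `formdates`)
        # is passed on here, and the parent rejects it with a TypeError.
        super(FormRequest, self).__init__(*args, **kwargs)
        self.formdata = formdata


ok = FormRequest("https://example.com/login", formdata={"a": "1"})
print(ok.method)  # POST

try:
    FormRequest("https://example.com/login", formdates={"a": "1"})
    error_message = None
except TypeError as exc:
    error_message = str(exc)
print(error_message)
```

The correctly spelled keyword is consumed and switches the method to POST; the misspelled one reaches the parent and fails, which matches the shape of the traceback above.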

The spider code:

```python
import urllib.request  # `import urllib` alone does not guarantee urllib.request is loaded

import scrapy


class doubanloginspider(scrapy.Spider):
    name = 'doubanlogin'
    allowed_domains = ['douban.com']
    # start_urls = ['http://www.douban.com/']  # unused: start_requests() below takes over
    headers = {"User-Agent":
               "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/55.0.2883.87 UBrowser/6.2.3964.2 Safari/537.36"}

    def start_requests(self):
        '''Request the login page.'''
        print('Requesting the login page')
        # A plain GET; a FormRequest without formdata would behave the same way.
        return [scrapy.Request(url="https://accounts.douban.com/login",
                               headers=self.headers,
                               meta={"cookiejar": 1},  # one cookie session for the whole login
                               callback=self.parse_before,
                               dont_filter=True)]

    def parse_before(self, response):
        '''Fill in the login form; fetch the captcha if one is shown.'''
        print("Filling in the login form")
        # First hidden <input> on the page; assumed to hold the captcha id.
        captcha_id = response.xpath('//input[@type="hidden"]/@value').extract_first()
        captcha_image_url = response.xpath('//img[@id="captcha_image"]/@src').extract_first()
        if captcha_image_url is None:
            print("No captcha required")
            formdata = {'source': 'index_nav',
                        'redir': 'https://www.douban.com',
                        'form_email': '***',
                        'form_password': '***',
                        'login': '登录'}  # value of the form's submit button, sent as-is
        else:
            print('Captcha required')
            save_imag_path = r"C:\Users\lxx\Desktop\douban\douban\spiders\captcha.jpeg"
            # Blocking download outside Scrapy's scheduler; acceptable for a one-off login.
            with urllib.request.urlopen(captcha_image_url) as fp:
                data = fp.read()
            with open(save_imag_path, 'wb') as f:
                f.write(data)
            print("Captcha saved from", captcha_image_url)  # the image URL is correct
            captcha_solution = input("Enter the captcha shown in the image: ")
            formdata = {'source': 'None',
                        'redir': 'https://www.douban.com',
                        'form_email': '***',
                        'form_password': '***',
                        'captcha-solution': captcha_solution,
                        'captcha-id': captcha_id,
                        'login': '登录'}
        print("Submitting the form, logging in...")
        # The keyword is `formdata`. A misspelled `formdate=` / `formdates=` is not
        # consumed by FormRequest and is forwarded to Request.__init__, which raises
        # the TypeError shown above.
        return scrapy.FormRequest.from_response(response,
                                                meta={"cookiejar": response.meta["cookiejar"]},
                                                headers=self.headers,
                                                formdata=formdata,
                                                callback=self.parse_after,
                                                dont_filter=True)

    def parse_after(self, response):
        '''Check whether the account name appears on the page after login.'''
        account = response.xpath('//a[@target="_blank"]/span/text()').extract_first()
        if account is None:
            print("Login failed")
        else:
            print("Login succeeded")
```
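For reference, `FormRequest` URL-encodes `formdata` into the body of a POST request, and `from_response` additionally pre-fills whatever fields the page's own `<form>` already contains, so the real request body will carry more fields than the dict alone. The stdlib-only sketch below shows just the encoding step for the two dicts built in `parse_before`; `build_login_body` is a hypothetical helper and the email, password, and captcha values are placeholders:

```python
from urllib.parse import parse_qs, urlencode


def build_login_body(email, password, captcha_solution=None, captcha_id=None):
    """Hypothetical helper: URL-encode the same fields the spider puts in formdata."""
    fields = {
        # The spider uses 'index_nav' without a captcha and the string 'None' with one.
        "source": "index_nav" if captcha_solution is None else "None",
        "redir": "https://www.douban.com",
        "form_email": email,
        "form_password": password,
        "login": "登录",
    }
    if captcha_solution is not None:
        fields["captcha-solution"] = captcha_solution
        fields["captcha-id"] = captcha_id
    # urlencode percent-encodes non-ASCII values as UTF-8 by default.
    return urlencode(fields)


body = build_login_body("user@example.com", "secret")
body_with_captcha = build_login_body("user@example.com", "secret",
                                     captcha_solution="abcd",
                                     captcha_id="hypothetical-id")
print(body)
print(parse_qs(body_with_captcha))
```

Decoding the body with `parse_qs` is a quick way to confirm which fields would actually be sent.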


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
