This post was last edited by wongyusing on 2018-3-28 22:05

Questions:

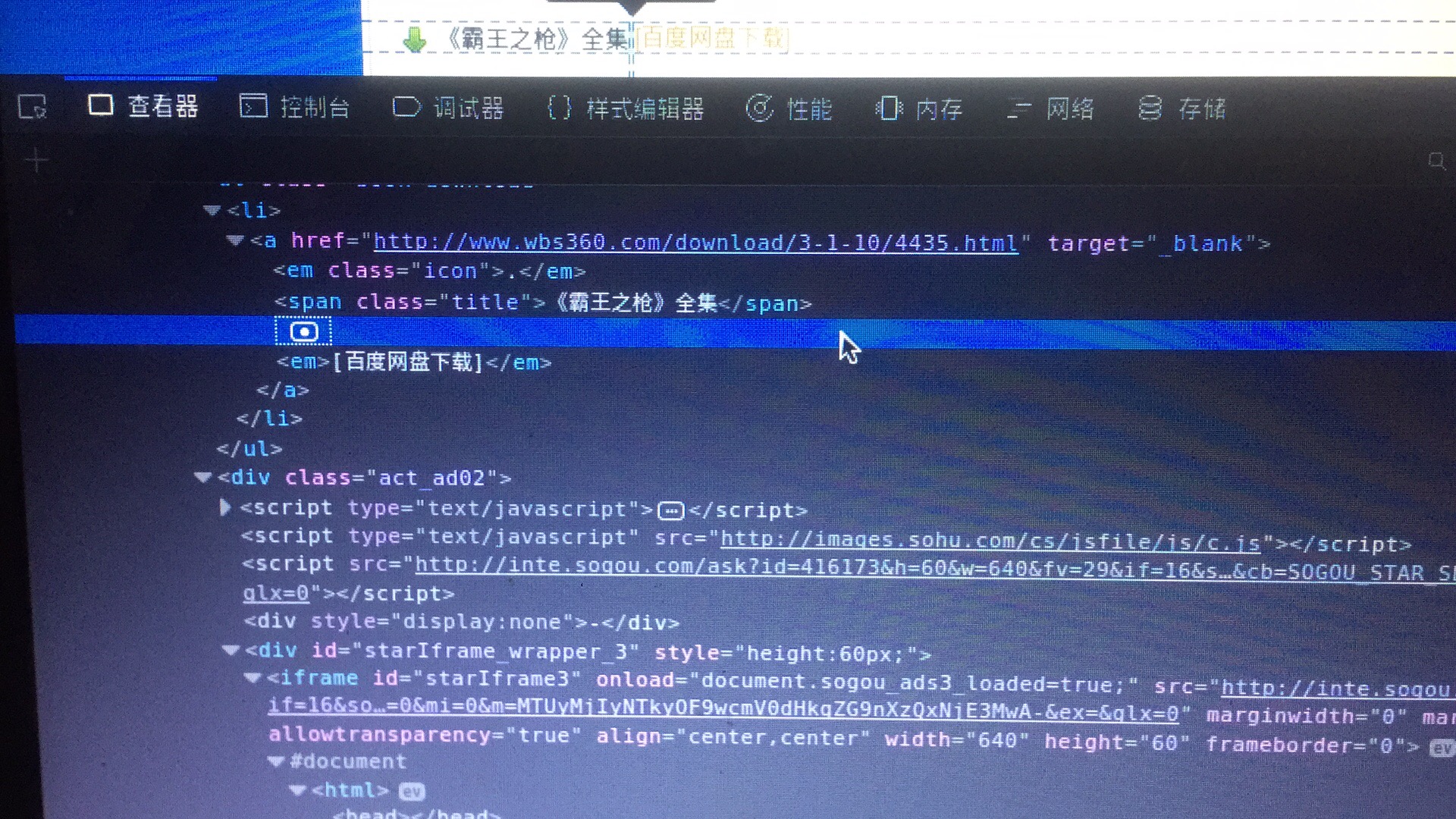

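1. How can I extract a hyperlink like the one in the screenshot? The text "百度网盘" (Baidu Netdisk) in the image is a link, but I cannot get the actual URL behind it. How should I handle this?

Screenshot 1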
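
One possible approach to question 1, offered only as a sketch: if "百度网盘" is an ordinary `<a>` element, the address is stored in its `href` attribute, not in its visible text, so the link can be looked up by text and its `href` read. The example below uses only the Python standard library. The names `LinkCollector`, `find_link`, and `SAMPLE_HTML`, as well as the sample markup itself, are hypothetical, since the real page source is not shown in the post. If the site fills in the address with JavaScript or a redirect page, this will not find it.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collect (visible text, href) pairs for every <a> that has an href."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None   # href of the <a> currently being read, if any
        self._text = []     # text fragments seen inside that <a>

    def handle_starttag(self, tag, attrs):
        if tag == 'a':
            self._href = dict(attrs).get('href')
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == 'a' and self._href is not None:
            self.links.append((''.join(self._text).strip(), self._href))
            self._href = None


def find_link(html, link_text, base_url):
    """Return the absolute URL of the first link whose text contains link_text."""
    parser = LinkCollector()
    parser.feed(html)
    for text, href in parser.links:
        if link_text in text:
            # urljoin leaves absolute URLs alone and resolves relative ones.
            return urljoin(base_url, href)
    return None


# Hypothetical markup; the placeholder address is not a real share link.
SAMPLE_HTML = '<p>下载地址: <a href="https://pan.baidu.com/s/EXAMPLE">百度网盘</a></p>'

if __name__ == '__main__':
    print(find_link(SAMPLE_HTML, '百度网盘', 'http://www.wbs360.com/book/4435.html'))
```

Inside a Scrapy callback the same idea can be written as `response.xpath('//a[contains(., "百度网盘")]/@href').get()`, followed by `response.urljoin(...)` if the value is relative.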

2. An iteration problem. The code is as follows:

    # -*- coding: utf-8 -*-
    import scrapy
    from scrapy.http import Request


    class GogoSpider(scrapy.Spider):
        name = 'gogo'
        allowed_domains = ['wbs360.com']
        start_urls = ['http://www.wbs360.com/books/']
        # http://www.wbs360.com/books/?p-1.html

        def find_all_url(self):  # The site's "书库" (library) pages list the URLs of all books
            url_0 = 'www.wbs360.com/books/?p-'
            url_1 = '.html'
            # By observation, the detail-page URL suffixes run from 465 to 4435, 3970 books in total.
            # By my count, however, the site has only 3956 novels; the other 14 are dead links
            # or traps set up as an anti-crawler measure.
            # So I do not build detail URLs by concatenation; I only collect novel URLs from the listing pages.
            for num in range(1, 133):  # Found by experiment: the site has 132 pages, plus one for range()
                url_2 = url_0 + str(num) + url_1  # Build the listing-page URL
                print(url_2)
                yield Request(url_2, callback=self.parse)
        # The problem is here: the results yielded by find_all_url are never passed to parse.
        # parse only receives the URLs from start_urls.

        def parse(self, response):
            # print(response.text)
            sel = scrapy.selector.Selector(response)
            sites = sel.xpath('//*[@id="book"]/div/div[3]/ul[2]/li')
            for site in sites:
                detail = site.xpath('a/@href').extract()  # Link to the novel's detail page
                print(detail)

The items file:

    # -*- coding: utf-8 -*-

    # Define here the models for your scraped items
    #
    # See documentation in:
    # https://doc.scrapy.org/en/latest/topics/items.html

    import scrapy


    class Book0Item(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        sort = scrapy.Field()    # category
        title = scrapy.Field()   # novel title
        author = scrapy.Field()  # author name
        link = scrapy.Field()    # download URL

Shell output:

    2018-03-28 16:20:30 [scrapy.utils.log] INFO: Scrapy 1.5.0 started (bot: book_0)
    2018-03-28 16:20:30 [scrapy.utils.log] INFO: Versions: lxml 4.2.1.0, libxml2 2.9.8, cssselect 1.0.3, parsel 1.4.0, w3lib 1.19.0, Twisted 17.9.0, Python 3.5.2 (default, Nov 23 2017, 16:37:01) - [GCC 5.4.0 20160609], pyOpenSSL 17.5.0 (OpenSSL 1.1.0h 27 Mar 2018), cryptography 2.2.2, Platform Linux-4.10.0-28-generic-x86_64-with-Ubuntu-16.04-xenial
    2018-03-28 16:20:30 [scrapy.crawler] INFO: Overridden settings: {'TELNETCONSOLE_ENABLED': False, 'BOT_NAME': 'book_0', 'NEWSPIDER_MODULE': 'book_0.spiders', 'SPIDER_MODULES': ['book_0.spiders']}
    2018-03-28 16:20:30 [scrapy.middleware] INFO: Enabled extensions:
    ['scrapy.extensions.logstats.LogStats',
     'scrapy.extensions.corestats.CoreStats',
     'scrapy.extensions.memusage.MemoryUsage']
    2018-03-28 16:20:30 [scrapy.middleware] INFO: Enabled downloader middlewares:
    ['scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',
     'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',
     'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',
     'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',
     'scrapy.downloadermiddlewares.retry.RetryMiddleware',
     'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',
     'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',
     'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',
     'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',
     'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware',
     'scrapy.downloadermiddlewares.stats.DownloaderStats']
    2018-03-28 16:20:30 [scrapy.middleware] INFO: Enabled spider middlewares:
    ['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',
     'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',
     'scrapy.spidermiddlewares.referer.RefererMiddleware',
     'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',
     'scrapy.spidermiddlewares.depth.DepthMiddleware']
    2018-03-28 16:20:30 [scrapy.middleware] INFO: Enabled item pipelines:
    []
    2018-03-28 16:20:30 [scrapy.core.engine] INFO: Spider opened
    2018-03-28 16:20:30 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)
    2018-03-28 16:20:31 [scrapy.core.engine] DEBUG: Crawled (200) <GET http://www.wbs360.com/books/> (referer: None)
    ['http://www.wbs360.com/book/4435.html']
    ['http://www.wbs360.com/book/4434.html']
    ['http://www.wbs360.com/book/4433.html']
    ['http://www.wbs360.com/book/4432.html']
    ['http://www.wbs360.com/book/4431.html']
    ['http://www.wbs360.com/book/4430.html']
    ['http://www.wbs360.com/book/4429.html']
    ['http://www.wbs360.com/book/4428.html']
    ['http://www.wbs360.com/book/4427.html']
    ['http://www.wbs360.com/book/4426.html']
    ['http://www.wbs360.com/book/4425.html']
    ['http://www.wbs360.com/book/4424.html']
    ['http://www.wbs360.com/book/4423.html']
    ['http://www.wbs360.com/book/4422.html']
    ['http://www.wbs360.com/book/4421.html']
    ['http://www.wbs360.com/book/4420.html']
    ['http://www.wbs360.com/book/4419.html']
    ['http://www.wbs360.com/book/4418.html']
    ['http://www.wbs360.com/book/4417.html']
    ['http://www.wbs360.com/book/4416.html']
    ['http://www.wbs360.com/book/4415.html']
    ['http://www.wbs360.com/book/4414.html']
    ['http://www.wbs360.com/book/4413.html']
    ['http://www.wbs360.com/book/4412.html']
    ['http://www.wbs360.com/book/4411.html']
    ['http://www.wbs360.com/book/4410.html']
    ['http://www.wbs360.com/book/4409.html']
    ['http://www.wbs360.com/book/4408.html']
    ['http://www.wbs360.com/book/4407.html']
    ['http://www.wbs360.com/book/4406.html']
    2018-03-28 16:20:31 [scrapy.core.engine] INFO: Closing spider (finished)
    2018-03-28 16:20:31 [scrapy.statscollectors] INFO: Dumping Scrapy stats:
    {'downloader/request_bytes': 219,
     'downloader/request_count': 1,
     'downloader/request_method_count/GET': 1,
     'downloader/response_bytes': 4724,
     'downloader/response_count': 1,
     'downloader/response_status_count/200': 1,
     'finish_reason': 'finished',
     'finish_time': datetime.datetime(2018, 3, 28, 8, 20, 31, 157313),
     'log_count/DEBUG': 1,
     'log_count/INFO': 7,
     'memusage/max': 740130816,
     'memusage/startup': 740130816,
     'response_received_count': 1,
     'scheduler/dequeued': 1,
     'scheduler/dequeued/memory': 1,
     'scheduler/enqueued': 1,
     'scheduler/enqueued/memory': 1,
     'start_time': datetime.datetime(2018, 3, 28, 8, 20, 30, 664665)}
    2018-03-28 16:20:31 [scrapy.core.engine] INFO: Spider closed (finished)
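
The numbers in the post can be cross-checked with a little arithmetic. The shell output lists 30 detail links for the single page that was crawled (`'downloader/request_count': 1`), and the spider's comments quote 3956 valid novels, 132 listing pages, and an ID range of 465 to 4435. The sketch below assumes that every listing page except the last holds 30 links, which the log shows only for the first page; the constant and variable names are chosen for this illustration.

```python
import math

LINKS_PER_PAGE = 30            # links printed for the one crawled page in the log
TOTAL_BOOKS = 3956             # the poster's count of valid novels
FIRST_ID, LAST_ID = 465, 4435  # detail-page ID range from the spider's comments

# Pages needed if every page but the last is full (an assumption).
pages_needed = math.ceil(TOTAL_BOOKS / LINKS_PER_PAGE)

# Links left over for the final page under the same assumption.
links_on_last_page = TOTAL_BOOKS - (pages_needed - 1) * LINKS_PER_PAGE

# Both endpoints are valid IDs, so the range is counted inclusively.
ids_in_range = LAST_ID - FIRST_ID + 1
unused_ids = ids_in_range - TOTAL_BOOKS

if __name__ == '__main__':
    print(pages_needed, links_on_last_page, ids_in_range, unused_ids)
```

Under that assumption the result is 132 pages with 26 links on the last one, which matches the page count found by experiment. Counting both endpoints, the ID range holds 3971 values, so 15 IDs are unused, one more than the 14 stated in the comments.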

File download link: https://pan.baidu.com/s/1eIN6z_umvADds0O0-dSSig Password: pjh6


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
