
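```python
import requests
import bs4


# Open a web page and return the response
def open_url(url):
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; rv:61.0) Gecko/20100101 Firefox/61.0'
    }
    res = requests.get(url, headers=headers)
    res.raise_for_status()  # stop on 403/404 instead of parsing an error page
    return res


# Find the tags that hold the review excerpts on one list page
def find_comments(res):
    soup = bs4.BeautifulSoup(res.text, 'html.parser')
    comments = []
    targets = soup.find_all('div', class_='short-content')
    for each in targets:
        comments.append(each.text.strip() + '\n')
    return comments


# Get the number of review pages from the paginator
def find_depth(res):
    soup = bs4.BeautifulSoup(res.text, 'html.parser')
    next_span = soup.find('span', class_='next')
    if next_span is None:
        return 1  # no paginator: all reviews fit on one page
    # The last page-number link sits just before the "next" span
    last_page = next_span.find_previous_sibling('a')
    return int(last_page.text) if last_page else 1


# Get the movie's id, e.g. 26322774 from
# https://movie.douban.com/subject/26322774/reviews
def get_name_id(host):
    name_id = host.split('/')[4]
    return str(name_id)


def main():
    host = 'https://movie.douban.com/subject/{}/reviews'.format(
        get_name_id('https://movie.douban.com/subject/26322774/reviews'))
    res = open_url(host)
    depth = find_depth(res)

    results = []
    for i in range(depth):
        # The list is paged in steps of 20: ?start=0, 20, 40, ...
        url = host + '?start=' + str(i * 20)
        res = open_url(url)
        results.extend(find_comments(res))

    # Output file named after the movie (逐梦演艺圈)
    with open('逐梦演艺圈评论.txt', 'w', encoding='utf-8') as f:
        for each in results:
            f.write(each)


if __name__ == '__main__':
    main()
```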
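`main()` rebuilds the review URL from the movie id and then walks the list with `?start=`. Pulling that logic into a pure function lets you check which pages would be requested without sending anything to Douban. This is only a sketch: `build_page_urls` is a hypothetical helper, and the page size of 20 is taken from the `i*20` in the loop above.

```python
def get_name_id(review_url):
    # 'https://movie.douban.com/subject/26322774/reviews'.split('/')
    # -> ['https:', '', 'movie.douban.com', 'subject', '26322774', 'reviews']
    return review_url.split('/')[4]


def build_page_urls(review_url, depth, page_size=20):
    """Hypothetical helper: every list-page URL that main() would request."""
    host = 'https://movie.douban.com/subject/{}/reviews'.format(get_name_id(review_url))
    return [host + '?start=' + str(i * page_size) for i in range(depth)]


urls = build_page_urls('https://movie.douban.com/subject/26322774/reviews', 3)
for u in urls:
    print(u)
```

If the printed URLs open the expected pages in a browser, any remaining problem is in the parsing, not in the paging.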

This is mostly modeled on 小甲鱼's (FishC) example that scrapes the Douban Top 250.

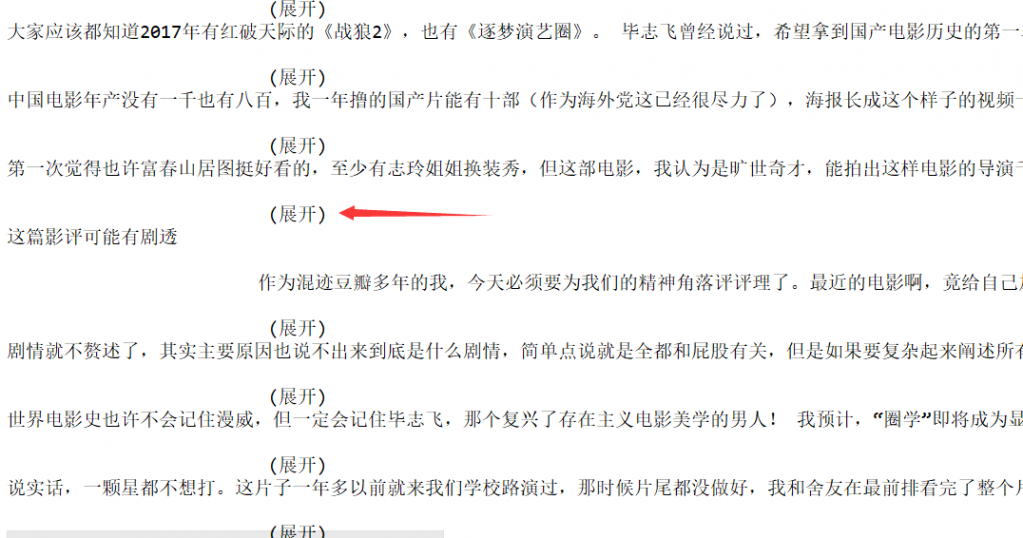

Right now, the scraped results look like the image above.

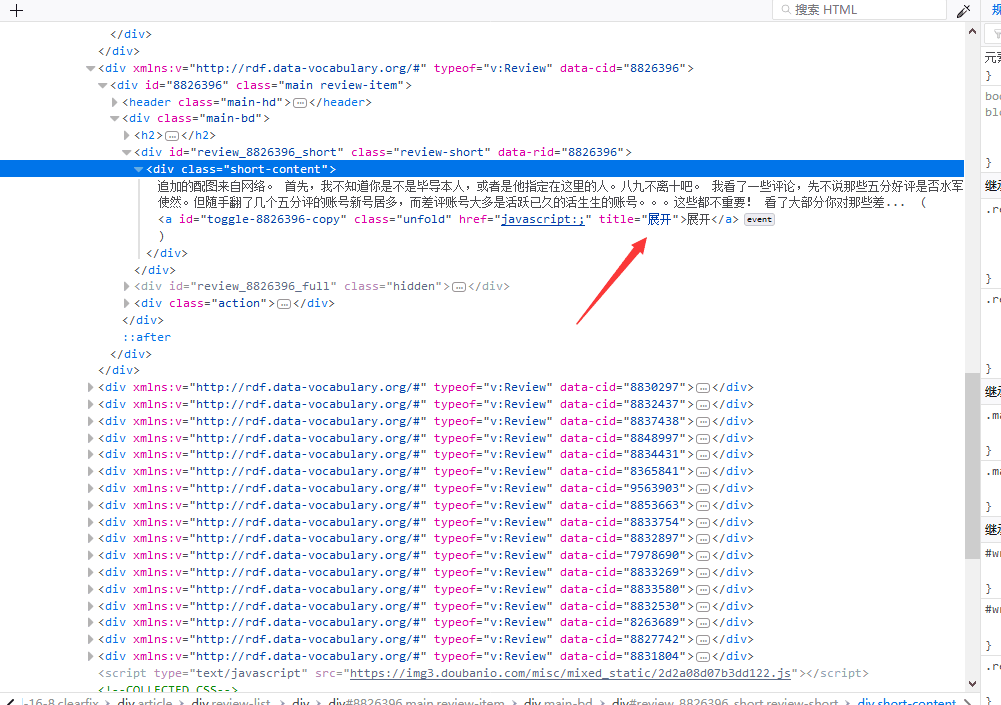

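My main question is how to handle the "展开" (expand) link shown in the second image.

Or, if someone could just tell me what this problem is called, I can search for it on Baidu myself. An actual fix would be even better, of course.

Any guidance from the experts would be much appreciated!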
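The list page appears to contain only a truncated excerpt of each review. The 展开 (expand) link loads the rest with JavaScript after the page has loaded, and `requests` does not run JavaScript, so it never sees that text. Useful search terms are "dynamically loaded content" or "AJAX scraping"; the browser's developer tools (Network tab) show which request the expand link actually sends. One workaround that avoids JavaScript entirely is to follow each review's own page. The sketch below assumes that each review's title is a link inside `div.main-bd h2` and that the full text sits in `div.review-content` on the linked page. Both selectors and all function names are assumptions, so check them against the live markup.

```python
import time

import bs4
import requests

HEADERS = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; rv:61.0) Gecko/20100101 Firefox/61.0'
}


def find_review_links(list_html):
    """Assumed markup: <div class="main-bd"><h2><a href="...">title</a></h2>."""
    soup = bs4.BeautifulSoup(list_html, 'html.parser')
    links = []
    for bd in soup.find_all('div', class_='main-bd'):
        h2 = bd.find('h2')
        a = h2.find('a', href=True) if h2 else None
        if a:
            links.append(a['href'])
    return links


def find_full_text(review_html):
    """Assumed markup: the full body is in <div class="review-content">."""
    soup = bs4.BeautifulSoup(review_html, 'html.parser')
    body = soup.find('div', class_='review-content')
    # Join paragraphs with newlines; empty string if the div is missing
    return body.get_text('\n', strip=True) if body else ''


def fetch_full_reviews(list_url):
    """Request one list page, then each review page it links to."""
    res = requests.get(list_url, headers=HEADERS)
    res.raise_for_status()
    texts = []
    for link in find_review_links(res.text):
        page = requests.get(link, headers=HEADERS)
        page.raise_for_status()
        texts.append(find_full_text(page.text) + '\n')
        time.sleep(1)  # one extra request per review, so slow down
    return texts
```

In `main()`, `results.extend(fetch_full_reviews(url))` could take the place of `find_comments(res)`. This costs one request per review, which is why the sketch pauses between them.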


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
