
Last edited by 快速收敛 on 2019-1-18 at 08:38

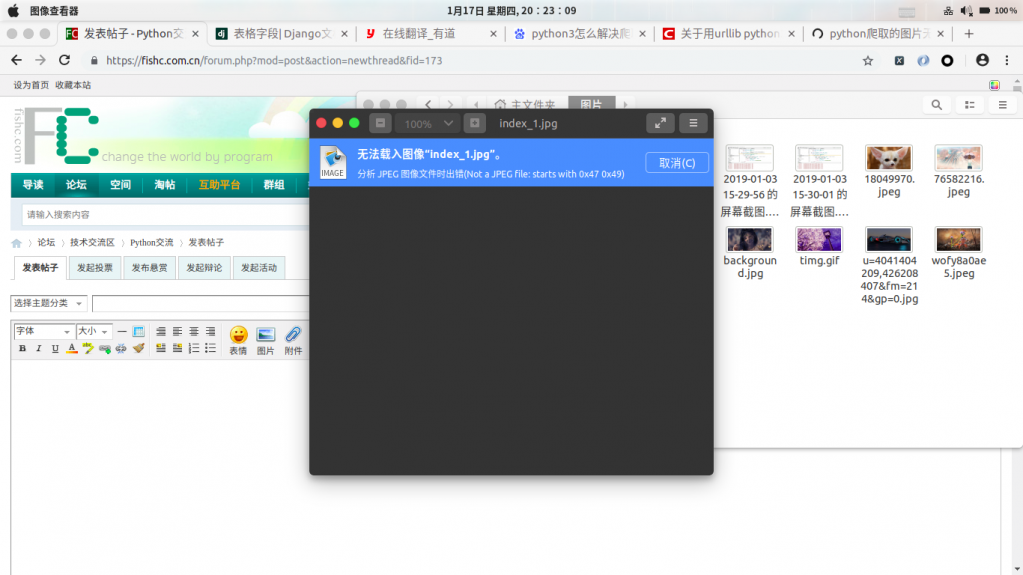

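As the screenshot shows, some images downloaded by my crawler won't load. I've checked that the file extension is correct, and other images in the same chapter display fine. Can anyone explain what's causing this?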

```python
import os
import re
import urllib.parse
import urllib.request
from queue import Empty, Queue
from threading import Thread

import requests
from fake_useragent import UserAgent
from lxml import etree  # only used by the commented-out line in main()


class Producers(Thread):
    """Take chapter URLs from `producer`, walk each chapter's pages and
    queue (image_url, chapter, image_name) tuples on `consumer`."""

    def __init__(self, producer, consumer, *args, **kwargs):
        super().__init__()
        self.producer = producer
        self.consumer = consumer

    def run(self):
        while True:
            # get_nowait() + Empty: calling get() and then checking empty()
            # threw away the last chapter URL in the queue.
            try:
                producer_url = self.producer.get_nowait()
            except Empty:
                print("Production finished")
                break
            count = 0
            while True:
                image_name = "index_%d.jpg" % count
                parse_url = producer_url + "index_%d.html" % count
                # print(parse_url)
                response = requests.get(parse_url, headers=HEADERS, proxies=PROXY)
                # A 500 response means we are past the chapter's last page.
                if response.status_code == 500:
                    break
                # Advance before any `continue`; otherwise a page without
                # mhurl is re-requested forever.
                count += 1
                parse_data = re.findall(r"<script\stype.*?>var\sTitle=.*?</script>", response.text)[0]
                # Chapter title; for this manga it starts with 火 (火影, Naruto).
                chapter = re.findall(r"火\w+", parse_data)[0]
                try:
                    image_date = re.findall(r'mhurl="(.*?)"', parse_data)[0]
                except IndexError:
                    continue
                # p0 for paths without 2016-2019, p1 otherwise.
                if '2017' not in image_date and '2016' not in image_date and '2018' not in image_date and '2019' not in image_date:
                    image_url = "http://p0.xiaoshidi.net/" + image_date
                else:
                    image_url = "http://p1.xiaoshidi.net/" + image_date
                # print(image_url)
                self.consumer.put((image_url, chapter, image_name))


class Consumers(Thread):
    """Download the queued images into one directory per chapter."""

    def __init__(self, producer, consumer, *args, **kwargs):
        super().__init__()
        self.producer = producer
        self.consumer = consumer

    def run(self):
        while True:
            # Stop after 30 s with nothing to download; checking empty()
            # right after get() dropped the item that had just been taken.
            try:
                image_url, chapter, image_name = self.consumer.get(timeout=30)
            except Empty:
                print("All downloads finished")
                break
            # exist_ok avoids a race when two threads create the same folder.
            os.makedirs(chapter, exist_ok=True)
            filename = chapter + "/" + image_name
            try:
                urllib.request.urlretrieve(image_url, filename)
                print("%s: %s downloaded" % (chapter, image_name))
            except Exception:
                continue


def main():
    producer = Queue(600)   # chapter URLs
    consumer = Queue(2000)  # (image_url, chapter, image_name) tuples
    response = requests.get(BASE_URL, headers=HEADERS)
    # html = etree.HTML(response.text)
    page_url = [BASE_URL + urllib.parse.quote(url[1:-1])
                for url in re.findall(r"<a.href=(\S+).title=.*?", response.text)]
    for url in page_url:
        producer.put(url)
    for i in range(5):
        p = Producers(producer, consumer)
        p.start()
    for i in range(10):
        c = Consumers(producer, consumer)
        c.start()


if __name__ == '__main__':
    BASE_URL = "https://manhua.fzdm.com/1/"
    UA = UserAgent(verify_ssl=False)
    HEADERS = {"User-Agent": UA.random}
    # A dict keeps one value per key: of the four "http" proxies originally
    # listed here, only the last one was ever used.
    PROXY = {
        "http": "121.233.251.4:9999",
    }
    main()
```

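That's the code; it downloads the Naruto manga. Every image opens fine on its own on the website, but for some reason part of each downloaded chapter won't display, even though I've checked that the image URLs are correct.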
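One way to narrow this down (a sketch, not the asker's code): `urlretrieve` writes whatever body comes back with a success status, so a placeholder or HTML page served in place of the picture ends up on disk as a `.jpg` that no viewer can open. The hypothetical helper below fetches the URL with the standard library only, checks that the body starts with the JPEG signature bytes `FF D8 FF`, and only then writes the file. The names `looks_like_jpeg` and `download_image` and the 10-second timeout are illustrative assumptions.

```python
import http.client
import urllib.request

# JPEG files start with the SOI marker FF D8, followed by another marker (FF ..).
JPEG_SIGNATURE = b"\xff\xd8\xff"


def looks_like_jpeg(data):
    """Return True if the bytes begin with the JPEG signature."""
    return data[:3] == JPEG_SIGNATURE


def download_image(url, filename, headers=None, timeout=10):
    """Hypothetical helper: save url to filename only if the body is a JPEG.

    Returns True on success, False (after printing why) otherwise.
    """
    try:
        # Request() itself raises ValueError for a malformed URL.
        req = urllib.request.Request(url, headers=headers or {})
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            data = resp.read()
    except (OSError, ValueError, http.client.HTTPException) as exc:
        # URLError, HTTPError and socket timeouts are all OSError subclasses.
        print("Request failed for %s: %s" % (url, exc))
        return False
    if not looks_like_jpeg(data):
        # Often an HTML error or placeholder page returned with status 200.
        print("Not a JPEG (%d bytes) from %s, starts with %r" % (len(data), url, data[:16]))
        return False
    with open(filename, "wb") as f:
        f.write(data)
    return True
```

In the `Consumers.run` loop, `download_image(image_url, filename, HEADERS)` could stand in for the `urlretrieve` call; the printed first bytes usually show whether the server returned a web page instead of an image.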

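For the chapter images, the number is one less:

if an image is from 2018, the subdomain is 17;

if it's from 2019, the subdomain is 18.

Unless I've misread your code, it doesn't handle this case.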

Take a look at the JS code below:

```html
<script type="text/javascript">
var Title = "海贼王922话";  // "One Piece, chapter 922"
var Clid = "2";
var mhurl = "2018/10/26055707481977.jpg";
var Url = "922";
var nexturl = "923";
var CTitle = "海贼王";
var mhss = getCookie("picHost");
if (mhss == "") { mhss = "p1.xiaoshidi.net" }
if (mhurl.indexOf("2016") == -1 && mhurl.indexOf("2017") == -1 && mhurl.indexOf("2018") == -1 && mhurl.indexOf("2019") == -1) { mhss = "p0.xiaoshidi.net" }
var mhpicurl = mhss + "/" + mhurl;
if (mhurl.indexOf("http") != -1) { mhpicurl = mhurl }
function nofind() {
    var e = event.srcElement ? event.srcElement : event.target;
    e.src = "http://p1.xiaoshidi.net/" + mhurl;
    var i = new Date;
    i.setTime(i.getTime() - 1);
    document.cookie = "picHost=0;path=/;domain=fzdm.com;expires=" + i.toGMTString();
    e.onerror = null;
    toastr.success("已更换为最快服务器~", {timeOut: 5e3})  // "Switched to the fastest server~"
};
document.write('<img src="http://' + mhpicurl + '" width="0" height="0" />');
</script>
```
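The host-selection part of that script can be ported to Python as a sketch for comparing against the crawler. It mirrors the JS: the `p1` default (standing in for an empty `picHost` cookie), the `p0` host for paths that contain none of 2016 to 2019, the case where `mhurl` already contains `http` and is used directly, and the `nofind()` retry on `p1`. The function names `parse_mhurl` and `candidate_urls` are hypothetical, and skipping the retry for full URLs is a deliberate simplification, not something the JS does.

```python
import re

YEAR_MARKERS = ("2016", "2017", "2018", "2019")  # the years the site's JS checks for


def parse_mhurl(script_text):
    """Return the mhurl value from the page's inline script, or None."""
    match = re.search(r'mhurl="(.*?)"', script_text)
    return match.group(1) if match else None


def candidate_urls(mhurl, pic_host=""):
    """Image URLs to try in order, following the page's JS.

    pic_host stands in for getCookie("picHost"); a crawler that never
    receives that cookie passes "" and gets the p1 default.
    """
    if "http" in mhurl:
        # mhurl is already a full URL: the JS sets mhpicurl to it directly.
        # The nofind() retry is skipped, since prefixing a host would not help.
        return [mhurl]
    host = pic_host or "p1.xiaoshidi.net"
    if not any(year in mhurl for year in YEAR_MARKERS):
        host = "p0.xiaoshidi.net"
    primary = "http://%s/%s" % (host, mhurl)
    # nofind(): when the image fails to load, the page retries on p1.
    fallback = "http://p1.xiaoshidi.net/" + mhurl
    return [primary] if primary == fallback else [primary, fallback]
```

Compared with the crawler in the question, the branch it lacks is the one where `mhurl` already contains `http`: prefixing a host to such a value produces an invalid URL. A downloader could try each returned URL in order and stop at the first one that yields an image.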


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
