
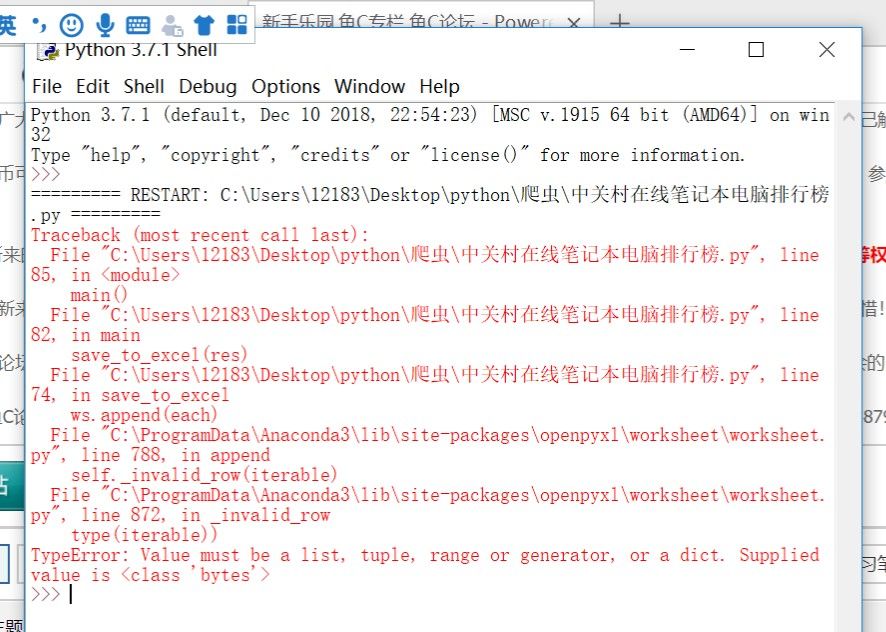

I'm practicing web scraping on the ZOL (中关村在线) ranking site, and I get an error when exporting to Excel. How should I fix it?

Code:

```python
import requests
import bs4
import re
import openpyxl


def open_url(url):
    # Use a proxy
    # proxies = {"http": "127.0.0.1:1080", "https": "127.0.0.1:1080"}
    headers = {'user-agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/57.0.2987.98 Safari/537.36'}
    # res = requests.get(url, headers=headers, proxies=proxies)
    res = requests.get(url, headers=headers)
    return res


def find_notbook(res):
    soup = bs4.beautifulsoup(res.text,'html.parser')

    # Rank
    rank = []
    targets = soup.find_all("div",class_="rank-list__cell cell-1")
    for each in targets:
        rank.append(each.text)

    # Model
    model = []
    targets = soup.find_all("div",class_="rank-list__cell cell-3")
    for each in targets:
        model.append(each.text)

    # Price
    price = []
    targets = soup.find_all("div",class_="rank-list__cell cell-4")
    for each in targets:
        price.appen(each.text)

    # Popularity
    hot = []
    targets = soup.find_all("div",class_="rank-list__cell cell-5")
    for each in targets:
        hot.appen(each.text)

    # Rating
    grade = []
    targets = soup.find_all("div",class_="rank-list__cell cell-6")
    for each in targets:
        grade.appen(each.text)

    result = []
    length = len(rank)
    for i in range(length):
        result.append([rank[i], model[i], price[i],hot[i],grade[i]])
    return result


'''
# Find out how many pages there are in total
def find_depth(res):
    soup = bs4.BeautifulSoup(res.text, 'html.parser')
    depth = soup.find('span', class_='next').previous_sibling.previous_sibling.text
    return int(depth)
'''


def save_to_excel(result):
    wb = openpyxl.Workbook()
    ws = wb.active
    ws['A1'] = "排名"  # Rank
    ws['B1'] = "型号"  # Model
    ws['C1'] = "价格"  # Price
    ws['D1'] = "热度"  # Popularity
    ws['E1'] = "评分"  # Rating
    for each in result:
        ws.append(each)
    wb.save("中关村笔记本电脑排行榜.xlsx")


def main():
    host = "http://top.zol.com.cn/compositor/16/notebook.html"
    res = open_url(host)
    save_to_excel(res)


if __name__ == "__main__":
    main()
```

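First, you never actually call find_notbook(res) to extract the data: main() passes the raw response returned by open_url() straight to save_to_excel().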

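Second, your scraping logic is incomplete. Take the rank list as an example: the header string '排名' ("Rank") ends up in rank as well, and because the No. 1 entry is shown as an image on the site, the text you get for it is empty, so an empty string is added to rank. Issues like these have to be handled first; the scraped data can't be used as-is.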

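Third, there are quite a few small mistakes, such as names that aren't typed out in full (`appen` instead of `append`, `bs4.beautifulsoup` instead of `bs4.BeautifulSoup`), and so on.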
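The cleanup described in the second point could look roughly like this. It is a minimal sketch, not part of either version of the code in this thread: `clean_rows` is a hypothetical helper, and it assumes (without checking the live page) that the header cells produce one row whose rank cell is text such as '排名', and that the image-only first place yields an empty rank cell.

```python
def clean_rows(rows):
    """Clean rows returned by find_notbook() before writing them to Excel.

    Assumptions (hypothetical, not verified against top.zol.com.cn):
    - the header row's rank cell is non-numeric text such as '排名';
    - a rank shown as an image is scraped as an empty string.
    """
    cleaned = []
    for row in rows:
        cells = [cell.strip() for cell in row]
        if not cells:
            continue  # ignore completely empty rows
        rank_text = cells[0]
        # A data row's rank is either empty (image) or a number; anything else is a header.
        if rank_text and not rank_text.isdigit():
            continue
        cleaned.append(cells)
    # Fill in missing ranks from the row position (assumes rows are in rank order)
    # and convert the rest to int so Excel stores them as numbers.
    for position, cells in enumerate(cleaned, start=1):
        cells[0] = int(cells[0]) if cells[0] else position
    return cleaned


scraped = [
    ["排名", "型号", "价格", "热度", "评分"],
    ["", "Model A", "5999", "99", "9.0"],
    [" 2 ", "Model B", "4999", "88", "8.5"],
]
print(clean_rows(scraped))
```

In main() it would slot in as `save_to_excel(clean_rows(find_notbook(res)))`. Because save_to_excel() already writes its own header into row 1, dropping the scraped header row also avoids a duplicated header in the spreadsheet.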

```python
import requests
import bs4
import re
import openpyxl


def open_url(url):
    # Use a proxy
    # proxies = {"http": "127.0.0.1:1080", "https": "127.0.0.1:1080"}
    headers = {'user-agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/57.0.2987.98 Safari/537.36'}
    # res = requests.get(url, headers=headers, proxies=proxies)
    res = requests.get(url, headers=headers)
    return res


def find_notbook(res):
    soup = bs4.BeautifulSoup(res.text,'html.parser')

    # Rank
    rank = []
    targets = soup.find_all("div",class_="rank-list__cell cell-1")
    for each in targets:
        rank.append(each.text)

    # Model
    model = []
    targets = soup.find_all("div",class_="rank-list__cell cell-3")
    for each in targets:
        model.append(each.text)

    # Price
    price = []
    targets = soup.find_all("div",class_="rank-list__cell cell-4")
    for each in targets:
        price.append(each.text)

    # Popularity
    hot = []
    targets = soup.find_all("div",class_="rank-list__cell cell-5")
    for each in targets:
        hot.append(each.text)

    # Rating
    grade = []
    targets = soup.find_all("div",class_="rank-list__cell cell-6")
    for each in targets:
        grade.append(each.text)

    result = []
    length = len(rank)
    for i in range(length):
        result.append([rank[i], model[i], price[i],hot[i],grade[i]])
    print(result)
    return result


'''
# Find out how many pages there are in total
def find_depth(res):
    soup = bs4.BeautifulSoup(res.text, 'html.parser')
    depth = soup.find('span', class_='next').previous_sibling.previous_sibling.text
    return int(depth)
'''


def save_to_excel(result):
    wb = openpyxl.Workbook()
    ws = wb.active
    ws['A1'] = "排名"  # Rank
    ws['B1'] = "型号"  # Model
    ws['C1'] = "价格"  # Price
    ws['D1'] = "热度"  # Popularity
    ws['E1'] = "评分"  # Rating
    for each in result:
        print(each)
        ws.append(each)
    wb.save("中关村笔记本电脑排行榜.xlsx")


def main():
    host = "http://top.zol.com.cn/compositor/16/notebook.html"
    res = open_url(host)
    # Parse the page first, then save the extracted rows
    res0 = find_notbook(res)
    save_to_excel(res0)


if __name__ == "__main__":
    main()
```
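In the corrected find_notbook(), result is built by indexing every list with `range(len(rank))`, so if any other column matched fewer cells than rank (for example after a page layout change), `model[i]` and friends raise IndexError. One alternative, sketched here with a hypothetical helper `build_rows` that is not part of the original code, is to pair the columns with `zip()`, which stops at the shortest list, and print a warning when the lengths disagree.

```python
def build_rows(rank, model, price, hot, grade):
    """Combine the per-column lists scraped by find_notbook() into rows.

    zip() stops at the shortest column, so mismatched lengths truncate the
    table instead of raising IndexError; a warning makes the mismatch visible.
    """
    columns = (rank, model, price, hot, grade)
    lengths = [len(column) for column in columns]
    if len(set(lengths)) > 1:
        print("warning: column lengths differ:", lengths)
    # Each row is a list, which is what ws.append() is given in save_to_excel().
    return [list(row) for row in zip(*columns)]


rows = build_rows(["1", "2"], ["A", "B"], ["5999", "4999"], ["99", "88"], ["9.0", "8.5"])
print(rows)
```

Inside find_notbook(), the final loop could then be replaced by `result = build_rows(rank, model, price, hot, grade)`. Whether silently truncating is acceptable is a judgment call; raising an exception on mismatched lengths would be the stricter choice.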


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)