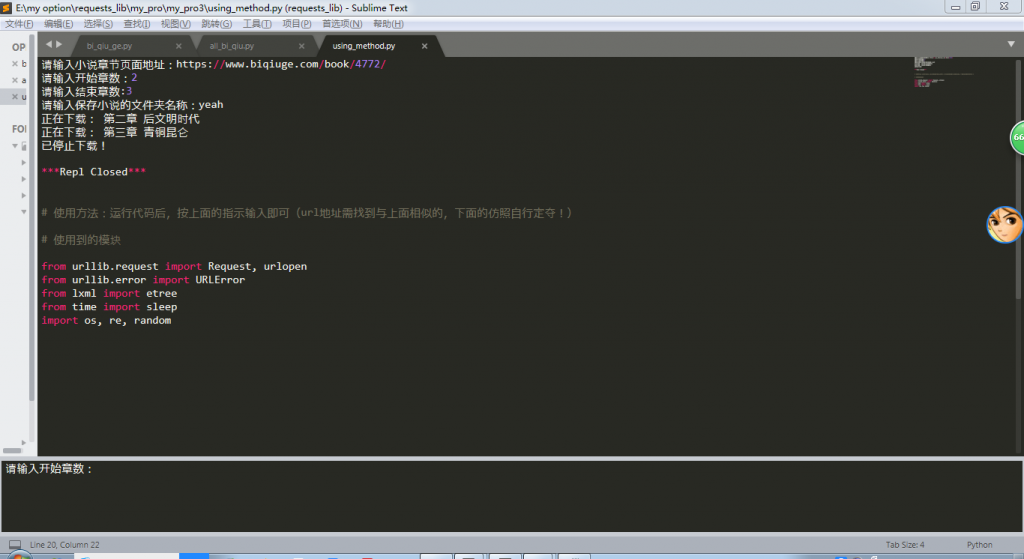


- # Site URL: https://www.biqiuge.com/ (笔趣阁)

- from urllib.request import Request, urlopen

- from urllib.error import URLError

- from lxml import etree

- from time import sleep

- import os, re, random

- # Fetch the raw HTML (bytes) of a page; returns None if the request fails

- def get_html(url):

-     headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.157 Safari/537.36'}

-     req = Request(url, headers=headers)

-     try:

-         response = urlopen(req)

-     except URLError as e:

-         # HTTPError (a URLError subclass) has both "code" and "reason", so check "code" first

-         if hasattr(e, "code"):

-             print("The server couldn't fulfill the request.")

-             print("Error code:", e.code)

-         elif hasattr(e, "reason"):

-             print("We failed to reach a server.")

-             print("Reason:", e.reason)

-         return None

-     else:

-         html = response.read()

-         return html

- # Collect the URLs and titles of the requested chapter range

- def get_all_chapter(html):

-

-     base_url = "https://www.biqiuge.com"

-     start = int(input("Enter the first chapter number: "))

-     end = int(input("Enter the last chapter number: "))

-     html_x = etree.HTML(html)

-     chapter_title = html_x.xpath('//div[@class="listmain"]//dd[position() > 6]/a/text()')

-     chapter_title = chapter_title[start-1:end]

-     all_chapter = html_x.xpath('//div[@class="listmain"]//dd[position() > 6]/a/@href')

-     # List comprehension: turn the relative links into full chapter URLs

-     each_chapter_url = [base_url+suffix for suffix in all_chapter]

-     each_chapter_url = each_chapter_url[start-1:end]

-     return [each_chapter_url,chapter_title]

-

- # Fetch each chapter's content and save it

- def get_content_and_save(all_chapter):

-

-     files = input("Enter the name of the folder to save the novel in: ")

-     # Create the folder if needed instead of failing when it already exists

-     os.makedirs(files, exist_ok=True)

-     counter = 0

-     for each_chapter_url in all_chapter[0]:

-         html = get_html(each_chapter_url)

-         if html is None:

-             # Download failed: skip this chapter but keep the title index in step

-             counter += 1

-             continue

-         html = html.decode("gbk")

-         tree = etree.HTML(html)

-         # Get the chapter content as a list of text nodes

-         fiction_content = tree.xpath('//div[@id="content"]/text()')

-         # Join the text nodes and tidy up the result

-         text_content = ""

-         for each_line_content in fiction_content:

-             text_content += each_line_content

-         beautiful_text = text_content.replace("\r", "\n").replace(" ","")

-         # Save

-         print("Downloading:", all_chapter[1][counter])

-         with open("./" + files + "/" + all_chapter[1][counter] + ".txt", "w", encoding="utf-8") as f:

-             f.write(beautiful_text)

-         counter += 1

-         # Pause a few seconds between requests

-         wait_time = random.choice([3, 4, 5, 6])

-         sleep(wait_time)

- # Main function

- def get_fictions(url):

-     html = get_html(url)

-     if html is None:

-         # The chapter list page could not be fetched, so there is nothing to download

-         return

-     html = html.decode("gbk")

-     all_chapter = get_all_chapter(html)

-     get_content_and_save(all_chapter)

-

- if __name__ == "__main__":

-     url = input("Enter the URL of the novel's chapter list page: ") # e.g. a URL in the format below

-     # url = "https://www.biqiuge.com/book/24277/"

-     # url = "https://www.biqiuge.com/book/4772/"

-     get_fictions(url)

-     print("Download stopped!")
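
The script above uses each chapter title directly as a file name. Titles scraped from a page can contain characters such as `?`, `:` or `/`, which are not allowed in file names on Windows, so `open()` may fail for some chapters. Below is a minimal sketch of one way to handle this. The helper `safe_filename` and its `replacement` parameter are hypothetical names and are not part of the original post; the sketch only uses the standard `re` module, which the script already imports.

```python
import re

# Hypothetical helper, not part of the original script.
# Matches characters that Windows forbids in file names, plus control characters.
_INVALID_CHARS = re.compile(r'[\\/:*?"<>|\x00-\x1f]')


def safe_filename(title, replacement="_"):
    """Return a copy of `title` that is safe to use as a file name."""
    # Replace forbidden characters, then drop surrounding whitespace
    # and trailing dots (Windows does not accept names ending in a dot).
    cleaned = _INVALID_CHARS.sub(replacement, title).strip().rstrip(".")
    # Fall back to a placeholder if nothing usable is left.
    return cleaned or "untitled"


if __name__ == "__main__":
    print(safe_filename("Chapter 1: What?"))
```

If this helper were adopted, the file path in `get_content_and_save` could be built from `safe_filename(all_chapter[1][counter]) + ".txt"` instead of the raw title. Note that two different titles may map to the same cleaned name, which this sketch does not try to detect.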


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
