
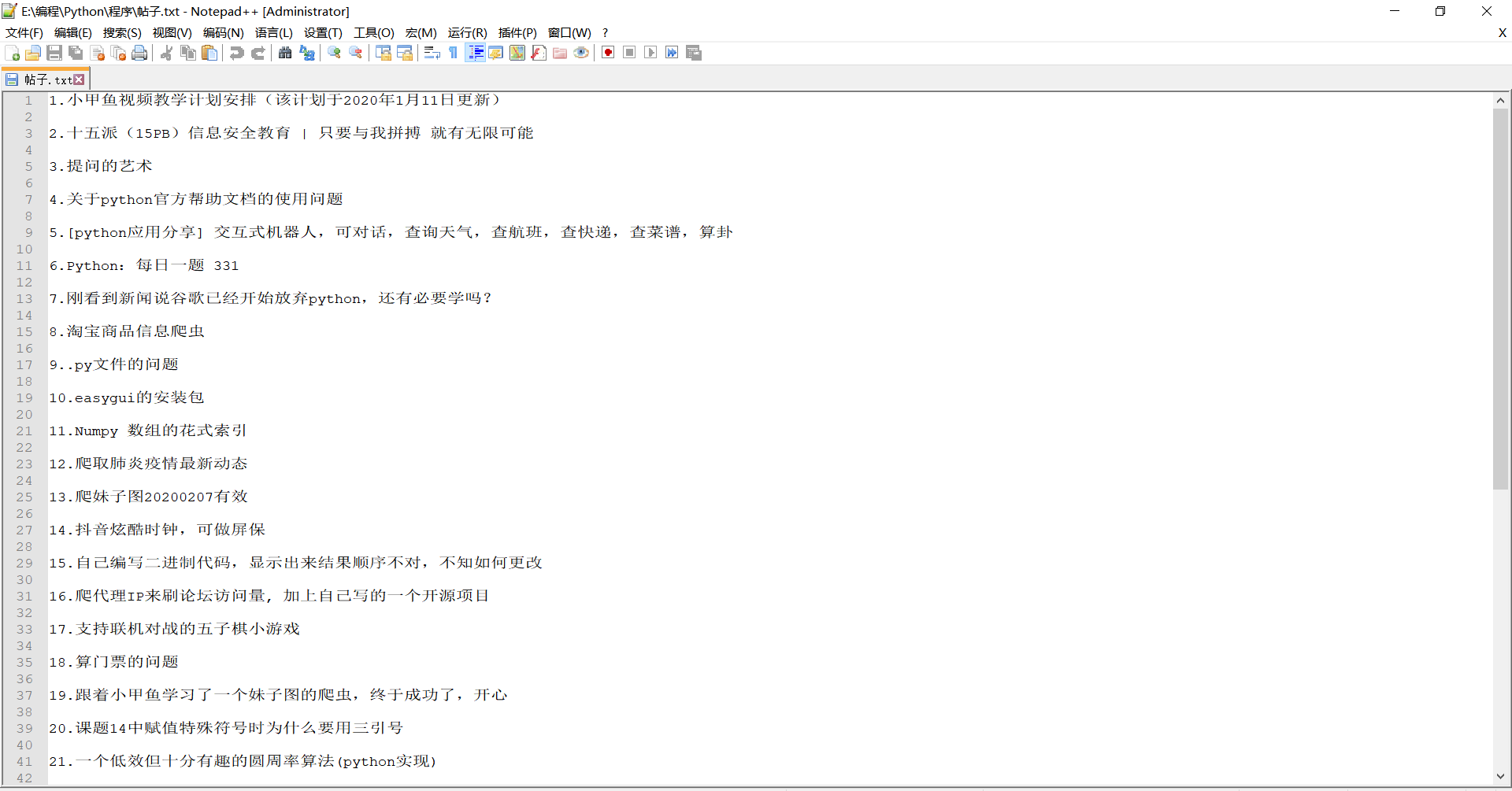

This is the output I currently get:

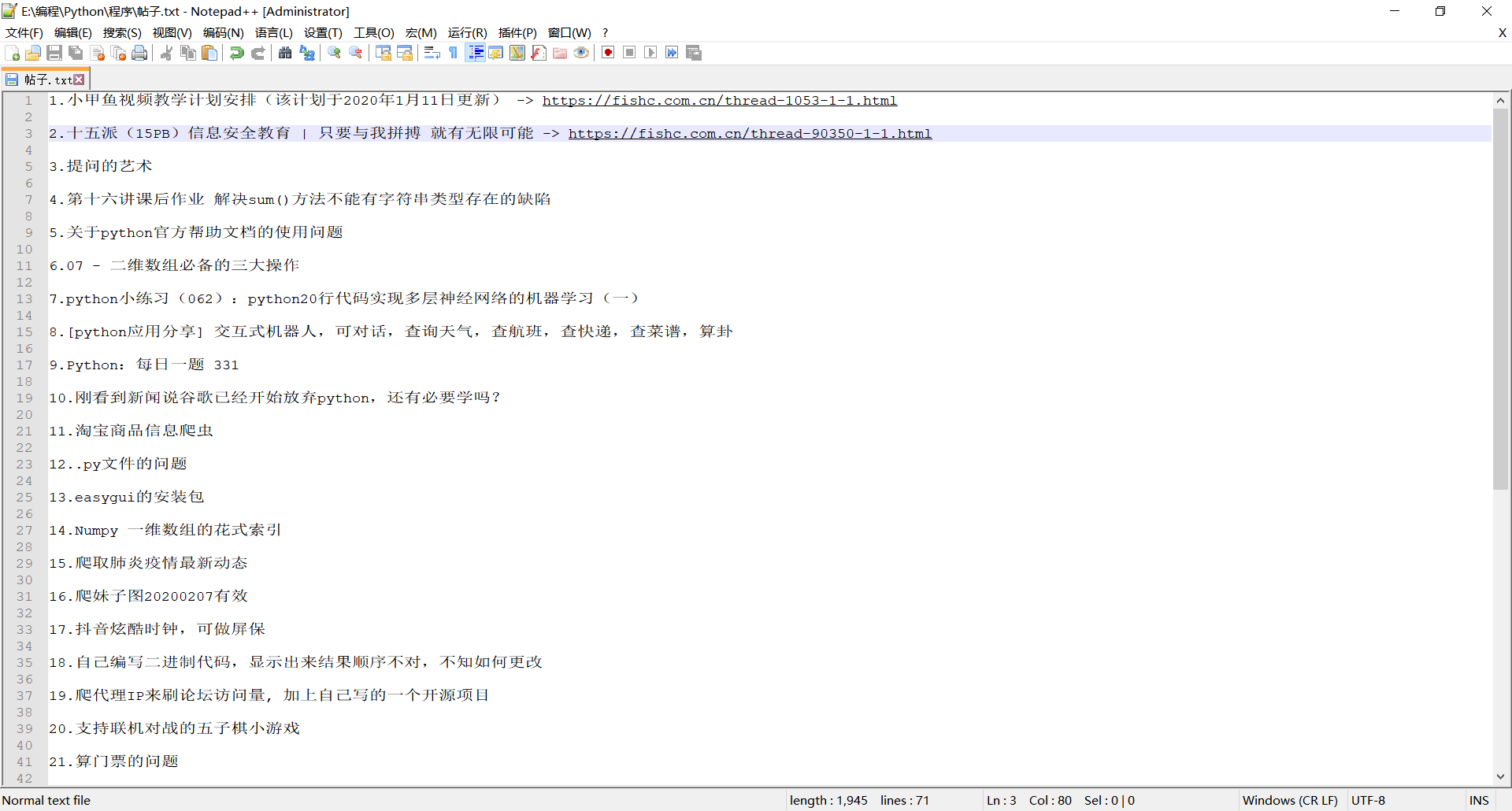

This is the output I want:

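In other words, I want each post's link added after the output I already have. I've tried all sorts of things but still can't get it to work. Could someone take a look and tell me how to do this? Thanks.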
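Both pieces of information live on the same tag: assuming, as the `find_all(class_="s xst")` call implies, that each title is an `<a>` element, the title is its text and the link is its `href` attribute. With BeautifulSoup that is `each.text` and `each["href"]`. The sketch below shows the same idea using only the standard library's `html.parser`, so it runs without bs4; the HTML snippet, the `thread-...` URLs and the `ThreadLinkParser` class are hypothetical.

```python
from html.parser import HTMLParser


class ThreadLinkParser(HTMLParser):
    """Hypothetical helper: collect (title, href) pairs from <a> tags
    whose class list contains "xst" (e.g. class="s xst")."""

    def __init__(self):
        super().__init__()
        self.links = []    # collected (title, href) pairs
        self._href = None  # href of the <a> tag we are currently inside
        self._text = []    # text fragments seen inside that <a> tag

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if tag == "a" and "xst" in classes:
            self._href = attrs.get("href")
            self._text = []

    def handle_data(self, data):
        # Only keep text while inside a matching <a> tag
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append(("".join(self._text).strip(), self._href))
            self._href = None


# Hypothetical page fragment: two thread titles and one unrelated link
sample = """
<th><a href="thread-101-1-1.html" class="s xst">First post</a></th>
<th><a href="thread-102-1-1.html" class="s xst">Second post</a></th>
<th><a href="home.php" class="other">Not a thread</a></th>
"""

parser = ThreadLinkParser()
parser.feed(sample)
parser.close()
for num, (title, href) in enumerate(parser.links, start=1):
    print(f"{num}. {title} -> {href}")
```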

Here is the code:

```python
import requests
import bs4
import re


def return_last(res):
    # Find the "last page" link in the board's pagination bar
    soup = bs4.BeautifulSoup(res.text, "html.parser")
    content = soup.find("a", class_="last")
    return content


def write(content, num=1):
    # Append the numbered thread titles of one page to the output file
    file = open("帖子.txt", "a", encoding="utf-8")

    for each in content:
        file.write(str(num) + "." + each.text + "\n\n")
        num += 1

    file.write("-" * 50 + "\n\n")  # separator after each page
    file.close()
    return num  # next number, so numbering continues across pages


def find_data(res):
    # All thread-title links on one board page
    soup = bs4.BeautifulSoup(res.text, "html.parser")
    content = soup.find_all(class_="s xst")
    return content


def open_url(url):
    headers = {"user-agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.100 Safari/537.36"}
    res = requests.get(url, headers=headers)
    return res


def main():
    # Clear the contents of the output file
    with open("帖子.txt", "w", encoding="utf-8"):
        pass

    url = input("Enter the board URL: ")
    page = int(input('Number of pages to scrape ("0" means all): '))

    if page == 0:
        # Read the total page count from the "last page" link
        res = open_url(url)
        content = return_last(res)
        p = re.compile(r"\d")
        page = int("".join(p.findall(content.text)))

    num = 1  # current page number, used for paging
    subject_num = 1
    new_url = url.split("-")
    new_url = new_url[0] + "-" + new_url[1] + "-" + str(num) + ".html"

    while num <= page:
        print("Scraping: ", new_url, sep="")
        res = open_url(new_url)
        content = find_data(res)
        subject_num = write(content, subject_num)
        num += 1
        new_url = url.split("-")
        new_url = new_url[0] + "-" + new_url[1] + "-" + str(num) + ".html"


if __name__ == "__main__":
    main()
```
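The paging logic above does two things: when "0" is entered it reads the page count from the text of the `<a class="last">` link, and for each page it rebuilds the URL by splitting on `"-"`. The sketch below isolates both steps as two hypothetical helpers, `last_page_number` and `page_url`. It assumes the board URL ends in `-<page>.html` (the `example.com` URL is made up) and splits only on the last `"-"`, so a hyphen elsewhere in the URL does not break it.

```python
import re


def last_page_number(text):
    """Hypothetical helper: first run of digits in the "last page" link text.

    The original code joins *all* digits in the text instead; both give
    the same result when the text contains a single number.
    """
    match = re.search(r"\d+", text)
    if match is None:
        raise ValueError(f"no page number in {text!r}")
    return int(match.group())


def page_url(board_url, n):
    """Hypothetical helper: URL of page n for a URL ending in "-<page>.html".

    rsplit("-", 1) splits only on the last hyphen, so hyphens elsewhere
    (for example in the domain name) are left untouched.
    """
    prefix, _ = board_url.rsplit("-", 1)
    return f"{prefix}-{n}.html"


board = "https://example.com/forum-173-1.html"  # hypothetical board URL
print(last_page_number("... 37"))  # 37
print(page_url(board, 2))          # https://example.com/forum-173-2.html
```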

A version that also writes each thread's link (the `href` attribute of the same `<a>` tag) after its title:

```python
import requests
import bs4
import re


def return_last(res):
    # Find the "last page" link in the board's pagination bar
    soup = bs4.BeautifulSoup(res.text, "html.parser")
    content = soup.find("a", class_="last")
    return content


def write(content, num=1):
    # Append "number. title -> link" for every thread on one page
    file = open("帖子.txt", "a", encoding="utf-8")
    for each in content:
        # each is an <a> tag: .text is the title, ["href"] is the link
        file.write(str(num) + ". " + each.text + " -> " + each["href"] + "\n\n")
        num += 1
    file.write("-" * 50 + "\n\n")  # separator after each page
    file.close()
    return num  # next number, so numbering continues across pages


def find_data(res):
    # All thread-title links on one board page
    soup = bs4.BeautifulSoup(res.text, "html.parser")
    content = soup.find_all(class_="s xst")
    return content


def open_url(url):
    headers = {
        "user-agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 "
                      "(KHTML, like Gecko) Chrome/75.0.3770.100 Safari/537.36"}
    res = requests.get(url, headers=headers)
    return res


def main():
    # Clear the contents of the output file
    with open("帖子.txt", "w", encoding="utf-8"):
        pass

    url = input("Enter the board URL: ")
    page = int(input('Number of pages to scrape ("0" means all): '))

    if page == 0:
        # Read the total page count from the "last page" link
        res = open_url(url)
        content = return_last(res)
        p = re.compile(r"\d")
        page = int("".join(p.findall(content.text)))

    num = 1  # current page number, used for paging
    subject_num = 1
    new_url = url.split("-")
    new_url = new_url[0] + "-" + new_url[1] + "-" + str(num) + ".html"

    while num <= page:
        print("Scraping: ", new_url, sep="")
        res = open_url(new_url)
        content = find_data(res)
        subject_num = write(content, subject_num)
        num += 1
        new_url = url.split("-")
        new_url = new_url[0] + "-" + new_url[1] + "-" + str(num) + ".html"


if __name__ == "__main__":
    main()
```
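The `href` values on a forum page are often relative (for example `thread-101-1-1.html`), so the links written to the file may not open on their own. A minimal sketch, assuming relative links: resolve each one against the URL of the page it was scraped from with `urllib.parse.urljoin`. The `format_entries` function, the sample entries and the `example.com` URL are hypothetical; like `write()` in the code above, it returns the next number so numbering continues across pages.

```python
from urllib.parse import urljoin


def format_entries(entries, base_url, start=1):
    """Hypothetical helper: format (title, href) pairs as numbered lines.

    Relative hrefs are resolved against base_url (the page they came
    from); absolute hrefs pass through urljoin unchanged.
    Returns the formatted text and the next number to use.
    """
    lines = []
    num = start
    for title, href in entries:
        lines.append(f"{num}. {title} -> {urljoin(base_url, href)}\n\n")
        num += 1
    lines.append("-" * 50 + "\n\n")  # page separator, as in write()
    return "".join(lines), num


entries = [("First post", "thread-101-1-1.html"),
           ("Second post", "/thread-102-1-1.html")]
text, next_num = format_entries(entries, "https://example.com/forum-173-1.html")
print(text, end="")
```

To save the result, `with open("帖子.txt", "a", encoding="utf-8") as f: f.write(text)` appends it and closes the file even if an exception is raised, which the explicit `open()`/`close()` pair does not guarantee.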


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
