
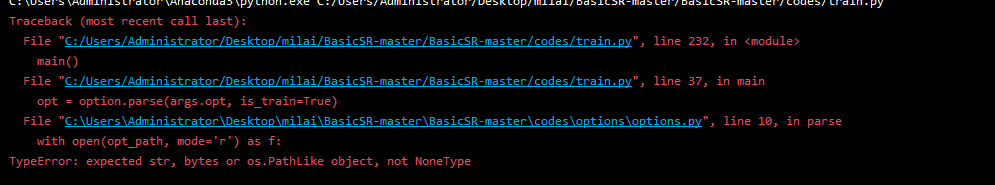

I get an error when trying to reproduce train.py. I suspect the path to the YAML file is not being read. I set it in options.py, but the path still doesn't seem to be found. The YAML-reading code I have seen online is written a bit differently from the author's, so I'm not sure what is going on. Any help from the experts here would be much appreciated.

```python
import os
import math
import argparse
import random
import logging

import torch
import torch.distributed as dist
import torch.multiprocessing as mp
from data.data_sampler import DistIterSampler

import options.options as option
from utils import util
from data import create_dataloader, create_dataset
from models import create_model


def init_dist(backend='nccl', **kwargs):
    ''' initialization for distributed training'''
    # if mp.get_start_method(allow_none=True) is None:
    if mp.get_start_method(allow_none=True) != 'spawn':
        mp.set_start_method('spawn')
    rank = int(os.environ['RANK'])
    num_gpus = torch.cuda.device_count()
    torch.cuda.set_device(rank % num_gpus)
    dist.init_process_group(backend=backend, **kwargs)


def main():
    #### options
    parser = argparse.ArgumentParser()
    parser.add_argument('-opt', type=str, help='Path to option YMAL file.')
    parser.add_argument('--launcher', choices=['none', 'pytorch'], default='none',
                        help='job launcher')
    parser.add_argument('--local_rank', type=int, default=0)
    args = parser.parse_args()
    opt = option.parse(args.opt, is_train=True)

    #### distributed training settings
    if args.launcher == 'none':  # disabled distributed training
        opt['dist'] = False
        rank = -1
        print('Disabled distributed training.')
    else:
        opt['dist'] = True
        init_dist()
        world_size = torch.distributed.get_world_size()
        rank = torch.distributed.get_rank()

    #### loading resume state if exists
    if opt['path'].get('resume_state', None):
        # distributed resuming: all load into default GPU
        device_id = torch.cuda.current_device()
        resume_state = torch.load(opt['path']['resume_state'],
                                  map_location=lambda storage, loc: storage.cuda(device_id))
        option.check_resume(opt, resume_state['iter'])  # check resume options
    else:
        resume_state = None

    #### mkdir and loggers
    if rank <= 0:  # normal training (rank -1) OR distributed training (rank 0)
        if resume_state is None:
            util.mkdir_and_rename(
                opt['path']['experiments_root'])  # rename experiment folder if exists
            util.mkdirs((path for key, path in opt['path'].items() if not key == 'experiments_root'
                         and 'pretrain_model' not in key and 'resume' not in key))

        # config loggers. Before it, the log will not work
        util.setup_logger('base', opt['path']['log'], 'train_' + opt['name'], level=logging.INFO,
                          screen=True, tofile=True)
        util.setup_logger('val', opt['path']['log'], 'val_' + opt['name'], level=logging.INFO,
                          screen=True, tofile=True)
        logger = logging.getLogger('base')
        logger.info(option.dict2str(opt))
        # tensorboard logger
        if opt['use_tb_logger'] and 'debug' not in opt['name']:
            version = float(torch.__version__[0:3])
            if version >= 1.1:  # PyTorch 1.1
                from torch.utils.tensorboard import SummaryWriter
            else:
                logger.info(
                    'You are using PyTorch {}. Tensorboard will use [tensorboardX]'.format(version))
                from tensorboardX import SummaryWriter
            tb_logger = SummaryWriter(log_dir='../tb_logger/' + opt['name'])
    else:
        util.setup_logger('base', opt['path']['log'], 'train', level=logging.INFO, screen=True)
        logger = logging.getLogger('base')

    # convert to NoneDict, which returns None for missing keys
    opt = option.dict_to_nonedict(opt)

    #### random seed
    seed = opt['train']['manual_seed']
    if seed is None:
        seed = random.randint(1, 10000)
    if rank <= 0:
        logger.info('Random seed: {}'.format(seed))
    util.set_random_seed(seed)

    torch.backends.cudnn.benchmark = True
    # torch.backends.cudnn.deterministic = True

    #### create train and val dataloader
    dataset_ratio = 200  # enlarge the size of each epoch
    for phase, dataset_opt in opt['datasets'].items():
        if phase == 'train':
            train_set = create_dataset(dataset_opt)
            train_size = int(math.ceil(len(train_set) / dataset_opt['batch_size']))
            total_iters = int(opt['train']['niter'])
            total_epochs = int(math.ceil(total_iters / train_size))
            if opt['dist']:
                train_sampler = DistIterSampler(train_set, world_size, rank, dataset_ratio)
                total_epochs = int(math.ceil(total_iters / (train_size * dataset_ratio)))
            else:
                train_sampler = None
            train_loader = create_dataloader(train_set, dataset_opt, opt, train_sampler)
            if rank <= 0:
                logger.info('Number of train images: {:,d}, iters: {:,d}'.format(
                    len(train_set), train_size))
                logger.info('Total epochs needed: {:d} for iters {:,d}'.format(
                    total_epochs, total_iters))
        elif phase == 'val':
            val_set = create_dataset(dataset_opt)
            val_loader = create_dataloader(val_set, dataset_opt, opt, None)
            if rank <= 0:
                logger.info('Number of val images in [{:s}]: {:d}'.format(
                    dataset_opt['name'], len(val_set)))
        else:
            raise NotImplementedError('Phase [{:s}] is not recognized.'.format(phase))
    assert train_loader is not None

    #### create model
    model = create_model(opt)

    #### resume training
    if resume_state:
        logger.info('Resuming training from epoch: {}, iter: {}.'.format(
            resume_state['epoch'], resume_state['iter']))

        start_epoch = resume_state['epoch']
        current_step = resume_state['iter']
        model.resume_training(resume_state)  # handle optimizers and schedulers
    else:
        current_step = 0
        start_epoch = 0

    #### training
    logger.info('Start training from epoch: {:d}, iter: {:d}'.format(start_epoch, current_step))
    for epoch in range(start_epoch, total_epochs + 1):
        if opt['dist']:
            train_sampler.set_epoch(epoch)
        for _, train_data in enumerate(train_loader):
            current_step += 1
            if current_step > total_iters:
                break
            #### update learning rate
            model.update_learning_rate(current_step, warmup_iter=opt['train']['warmup_iter'])

            #### training
            model.feed_data(train_data)
            model.optimize_parameters(current_step)

            #### log
            if current_step % opt['logger']['print_freq'] == 0:
                logs = model.get_current_log()
                message = '<epoch:{:3d}, iter:{:8,d}, lr:{:.3e}> '.format(
                    epoch, current_step, model.get_current_learning_rate())
                for k, v in logs.items():
                    message += '{:s}: {:.4e} '.format(k, v)
                    # tensorboard logger
                    if opt['use_tb_logger'] and 'debug' not in opt['name']:
                        if rank <= 0:
                            tb_logger.add_scalar(k, v, current_step)
                if rank <= 0:
                    logger.info(message)

            # validation
            if current_step % opt['train']['val_freq'] == 0 and rank <= 0:
                avg_psnr = 0.0
                idx = 0
                for val_data in val_loader:
                    idx += 1
                    img_name = os.path.splitext(os.path.basename(val_data['LQ_path'][0]))[0]
                    img_dir = os.path.join(opt['path']['val_images'], img_name)
                    util.mkdir(img_dir)

                    model.feed_data(val_data)
                    model.test()

                    visuals = model.get_current_visuals()
                    sr_img = util.tensor2img(visuals['SR'])  # uint8
                    gt_img = util.tensor2img(visuals['GT'])  # uint8

                    # Save SR images for reference
                    save_img_path = os.path.join(img_dir,
                                                 '{:s}_{:d}.png'.format(img_name, current_step))
                    util.save_img(sr_img, save_img_path)

                    # calculate PSNR
                    crop_size = opt['scale']
                    gt_img = gt_img / 255.
                    sr_img = sr_img / 255.
                    cropped_sr_img = sr_img[crop_size:-crop_size, crop_size:-crop_size, :]
                    cropped_gt_img = gt_img[crop_size:-crop_size, crop_size:-crop_size, :]
                    avg_psnr += util.calculate_psnr(cropped_sr_img * 255, cropped_gt_img * 255)

                avg_psnr = avg_psnr / idx

                # log
                logger.info('# Validation # PSNR: {:.4e}'.format(avg_psnr))
                logger_val = logging.getLogger('val')  # validation logger
                logger_val.info('<epoch:{:3d}, iter:{:8,d}> psnr: {:.4e}'.format(
                    epoch, current_step, avg_psnr))
                # tensorboard logger
                if opt['use_tb_logger'] and 'debug' not in opt['name']:
                    tb_logger.add_scalar('psnr', avg_psnr, current_step)

            #### save models and training states
            if current_step % opt['logger']['save_checkpoint_freq'] == 0:
                if rank <= 0:
                    logger.info('Saving models and training states.')
                    model.save(current_step)
                    model.save_training_state(epoch, current_step)

    if rank <= 0:
        logger.info('Saving the final model.')
        model.save('latest')
        logger.info('End of training.')


if __name__ == '__main__':
    main()
```

This is the code of train.py.
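One way to test the suspicion that the YAML path is not being found is to check the path by hand before it reaches `option.parse`. In the script above `-opt` has no default, so `args.opt` is `None` whenever the flag is omitted on the command line. The sketch below uses only the standard library; `resolve_opt_path` is a hypothetical helper, not part of the original repository, and the example file name is made up. It tries the path relative to the current working directory and then relative to the script's directory, which are assumed here to be the two likely places.

```python
import os
import sys


def resolve_opt_path(opt_path, script_dir=None):
    """Return the first existing file matching opt_path, or None.

    Hypothetical helper for debugging; not part of the original code base.
    """
    if not opt_path:
        # Covers the case where -opt was not given and args.opt is None.
        return None
    if script_dir is None:
        script_dir = os.path.dirname(os.path.abspath(sys.argv[0]))
    candidates = [
        # Interpreted relative to the directory the command was run from.
        os.path.abspath(opt_path),
        # Interpreted relative to the directory that contains the script.
        os.path.abspath(os.path.join(script_dir, opt_path)),
    ]
    for candidate in candidates:
        if os.path.isfile(candidate):
            return candidate
    return None


# 'options/train/example.yml' is a made-up path used only for illustration.
print('cwd:', os.getcwd())
print('resolved:', resolve_opt_path('options/train/example.yml'))
```

If this prints `None` for the path you pass to `-opt`, the problem is most likely the working directory or the path itself rather than the YAML-reading code.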

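Does your computer not have any deep learning environment set up at all, such as CUDA, cuDNN, and PyTorch?

Code like this is not something where you look up a library online, download it, and it just works.

(Step 0: make sure your computer's GPU is adequate. It must be an NVIDIA card, GTX 1060 at minimum.)

First you need to set up a PyTorch environment; there are plenty of tutorials online.

Then run a "hello world" program to test whether your PyTorch environment was installed successfully.

Only after that should you run and test the code you downloaded. If you got the code from GitHub, the README.md usually explains how to run it; follow its instructions step by step to get the code running.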
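The "hello world" check mentioned above could look something like the following. This is only a minimal sketch: `check_pytorch` is a name chosen here for illustration, and it reports whether PyTorch can be imported and whether it sees a CUDA GPU, without training anything. It is written so that it still runs, and reports `installed: False`, on a machine where PyTorch is missing.

```python
import importlib.util


def check_pytorch():
    """Report whether PyTorch is importable and whether it can see a CUDA GPU."""
    info = {
        'installed': False,
        'version': None,
        'cuda_available': False,
        'gpu_count': 0,
    }
    # find_spec returns None when the package cannot be located.
    if importlib.util.find_spec('torch') is None:
        return info

    import torch  # imported lazily so the script also runs without PyTorch

    info['installed'] = True
    info['version'] = torch.__version__
    info['cuda_available'] = torch.cuda.is_available()
    if info['cuda_available']:
        info['gpu_count'] = torch.cuda.device_count()
    return info


print(check_pytorch())
```

If `installed` or `cuda_available` comes back `False`, fix the environment first; the training script above calls `torch.cuda` functions and will not get far without a working GPU setup.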


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
