5 fish coins

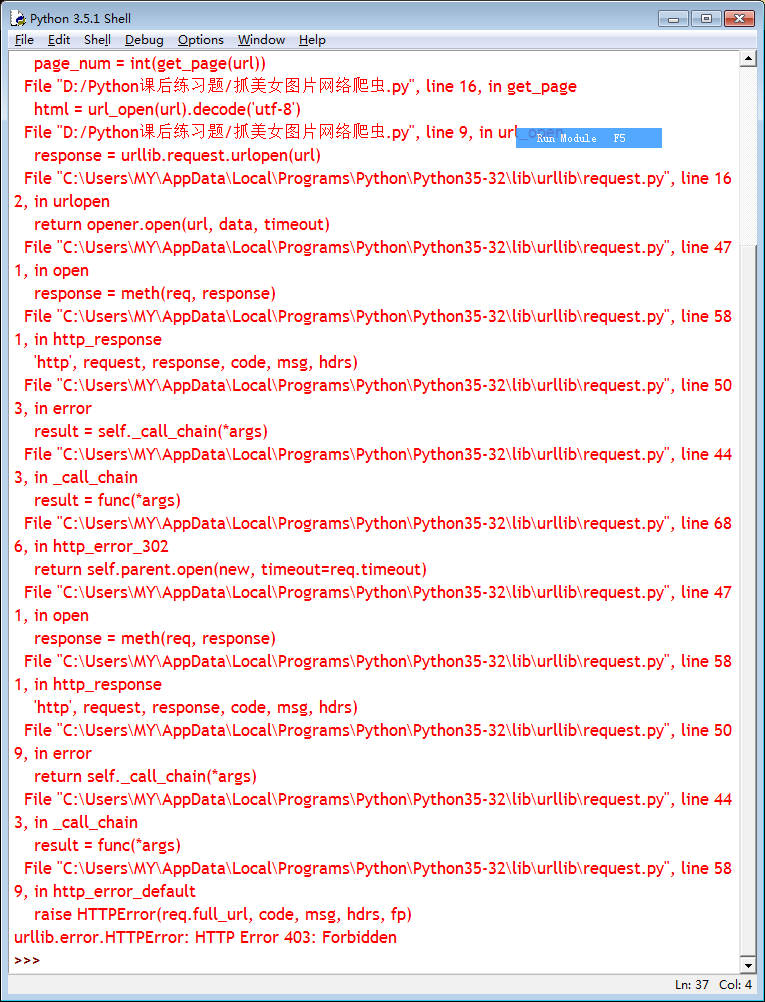

- import os
- import urllib.request
-
- def url_open(url):
-     # Attach a browser-like User-Agent so the request looks like a normal visit
-     req = urllib.request.Request(url)
-     req.add_header('User-Agent', 'Mozilla/5.0 (Windows NT 6.3; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/39.0.2171.65 Safari/537.36')
-     # Open req, not url; otherwise the header above is never sent
-     response = urllib.request.urlopen(req)
-     html = response.read()
-     print(url)
-     return html
-
- def get_page(url):
-     # Read the current page number out of the 'current-comment-page' marker
-     html = url_open(url).decode('utf-8')
-     a = html.find('current-comment-page') + 23
-     b = html.find(']', a)
-     return html[a:b]
-
- def find_imgs(url):
-     # Collect every .jpg address that follows an 'img src=' attribute
-     html = url_open(url).decode('utf-8')
-     img_addrs = []
-     a = html.find('img src=')
-     while a != -1:
-         b = html.find('.jpg', a, a+255)
-         if b != -1:
-             img_addrs.append(html[a+9:b+4])
-         else:
-             b = a + 9
-         a = html.find('img src=', b)
-     return img_addrs
-
- def save_imgs(folder, img_addrs):
-     # Files go to the current directory, which download_mm has set to folder
-     for each in img_addrs:
-         filename = each.split('/')[-1]
-         with open(filename, 'wb') as f:
-             img = url_open(each)
-             f.write(img)
-
- def download_mm(folder='OOXX', pages=10):
-     # exist_ok avoids a FileExistsError when the script is run a second time
-     os.makedirs(folder, exist_ok=True)
-     os.chdir(folder)
-     url = "http://jandan.net/ooxx/"
-     page_num = int(get_page(url))
-     for i in range(pages):
-         # Step back one page per iteration (page_num -= i would skip pages)
-         page_url = url + 'page-' + str(page_num - i) + '#comments'
-         img_addrs = find_imgs(page_url)
-         save_imgs(folder, img_addrs)
-
- if __name__ == '__main__':
-     download_mm()

Best answer

I've heard that jandan.net (煎蛋网) now blocks crawlers...

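That said, you can try disguising the crawler as a browser by adding the code below, or more headers than that (Xiao Jiayu covers hiding a crawler in lesson 55).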
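A minimal sketch of the browser-disguise idea, using only the standard library. The header values, the `Referer`, and the helper name `build_request` are assumptions chosen for illustration, and nothing here guarantees that jandan.net will accept the request.

```python
import urllib.request

# Assumed header values: any realistic browser strings would do here.
BROWSER_HEADERS = {
    "User-Agent": "Mozilla/5.0 (Windows NT 6.3; WOW64) AppleWebKit/537.36 "
                  "(KHTML, like Gecko) Chrome/39.0.2171.65 Safari/537.36",
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    "Referer": "http://jandan.net/",
}


def build_request(url, headers=BROWSER_HEADERS):
    """Return a Request object that carries browser-like headers (hypothetical helper)."""
    return urllib.request.Request(url, headers=headers)


def url_open(url):
    # Pass the Request object, not the bare URL, so the headers are actually sent.
    with urllib.request.urlopen(build_request(url), timeout=10) as response:
        return response.read()
```

The key point is that `urlopen` must receive the `Request` object; calling `urlopen(url)` sends Python's default User-Agent no matter which headers were added to the request.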

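Another option is to change the URL to www.chunmm.com, though you will need to inspect the page elements there and adjust parts of the code accordingly.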
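When switching sites, the part most likely to need changing is the image-address extraction. Below is a rough sketch that takes the pattern as a parameter, so it can be adjusted after inspecting the new page. The regular expression, the name `find_img_addrs`, and the sample HTML are all hypothetical; the real markup on any given site may differ.

```python
import re

# Hypothetical pattern: captures src="..." values that end in .jpg.
# Adjust it to match whatever the inspected page actually contains.
IMG_PATTERN = re.compile(r'<img[^>]+src="([^"]+\.jpg)"', re.IGNORECASE)


def find_img_addrs(html, pattern=IMG_PATTERN):
    """Return every image address in html that the pattern captures."""
    return pattern.findall(html)


# Made-up sample markup, used only to show the call.
sample = ('<p><img src="http://example.com/a.jpg" />'
          '<img src="http://example.com/b.png" /></p>')
print(find_img_addrs(sample))  # prints ['http://example.com/a.jpg']
```

Keeping the pattern separate from the download logic means only one line needs to change when the page structure does.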

( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)