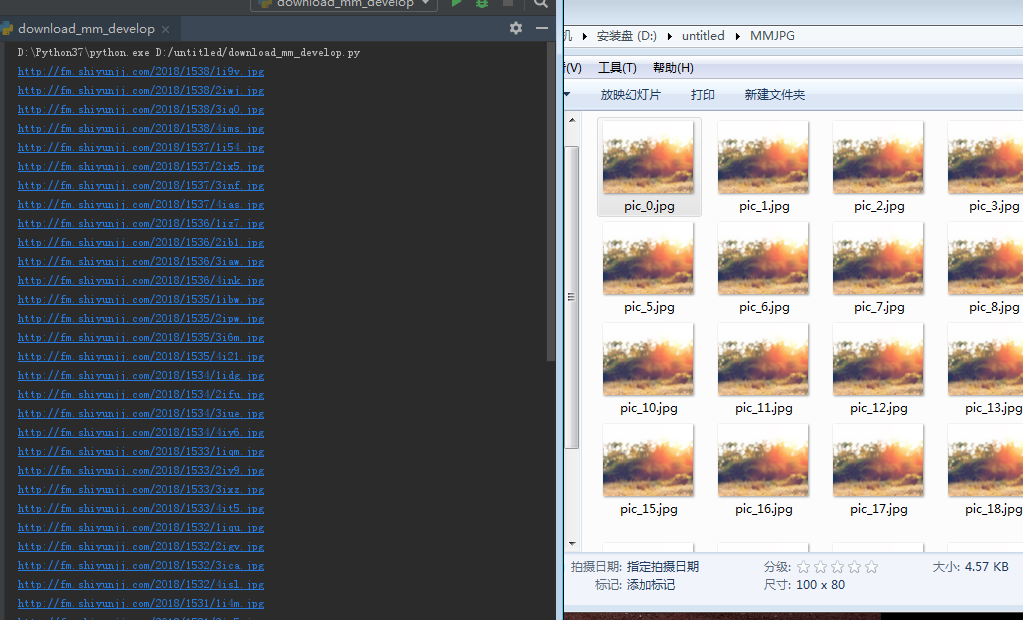

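I couldn't scrape the image URLs from jandan.net (煎蛋网), so I'm practicing on http://www.mmjpg.com/ instead. The image URLs are extracted correctly, but every file I save turns out to be the site's censored placeholder image. Could someone help me figure out where I went wrong?

The code is as follows: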

import os
import random
import urllib.request

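def set_proxy():  # install a randomly chosen proxy
    # Keep the whole list here: calling random.choice() on an already chosen
    # address would return a single character of it.
    ip_list = ['123.7.61.8:53281', '42.48.118.106:50038', '119.254.94.105:58999', '61.138.33.20:808']
    # Note: an 'https' mapping only applies to https:// URLs; the http:// pages
    # fetched below do not go through this proxy.
    proxy_support = urllib.request.ProxyHandler({'https': random.choice(ip_list)})
    opener = urllib.request.build_opener(proxy_support)
    urllib.request.install_opener(opener)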

def url_open(url):  # open the URL with a browser User-Agent and return the raw response bytes
    set_proxy()
    req = urllib.request.Request(url)
    req.add_header('User-Agent', 'Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.67 Safari/537.36')
    response = urllib.request.urlopen(req)
    html = response.read()
    return html

def get_address(html, mm_collect):  # collect the album URLs listed on an index page
    a = html.find('<div class="main">')
    b = html.find('<em class="info">共', a+255)
    html = html[a:b]
    a = html.find('<span class="title">')
    while a != -1:
        b = html.find('target', a)
        if b != -1:
            # skip '<span class="title"><a href="' and drop the closing '" '
            mm_collect.append(html[a+29:b-2])
        else:
            b = a+28
        a = html.find('<span class="title">', b)

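def get_page(html):  # return the number of pages in an album
    a = html.find('没有了')  # "no more", shown when there is no previous album
    if a == -1:
        a = html.find('上一篇')  # "previous post"
    b = html.find('全部图片', a)  # "all images"
    html = html[a:b]
    a = html.find('<i></i>')+15
    b = html.find(r'</a>', a)
    html = html[a:b]
    a = html.find('>')+1
    # b indexed the previous string and is past the end of this one, so slice to the end
    return int(html[a:])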

def open_mm(mm_collect, pic_address):  # open each album and build the list of image URLs
    for i in mm_collect:
        html = url_open(i).decode('utf-8')
        page = get_page(html)
        a = html.find('<div class="content" id="content">')
        b = html.find('.jpg')
        html = html[a+74:b+4]
        a = html.find('<img src=')+10
        html = html[a:]
        pic_address.append(html)
        for p in range(2, 5):  # the real page count is large, so only a few pages are fetched to keep the run short
            url = i + r'/' + str(p)
            html = url_open(url).decode('utf-8')
            a = html.find('<div class="content" id="content">')
            b = html.find('.jpg')
            html = html[a+74:b+4]
            a = html.find('<img src=')+10
            html = html[a:]
            pic_address.append(html)

def save_pic(addresses):  # download every image URL in the list and save it as a .jpg file
    for num, address in enumerate(addresses):
        print(address)
        data = url_open(address)
        file_name = 'pic_' + str(num) + '.jpg'
        with open(file_name, 'wb') as f:
            f.write(data)

def download_mm(folder='MMJPG', pages=1):  # main routine: crawl the index pages and save the images
    os.makedirs(folder, exist_ok=True)  # do not fail if the folder is left over from an earlier run
    os.chdir(folder)
    url = 'http://www.mmjpg.com/'
    html = url_open(url).decode('utf-8')
    pages = int(pages)
    mm_collect = []
    pic_address = []
    get_address(html, mm_collect)
    for i in range(2, pages+1):
        url = 'http://www.mmjpg.com/' + r'home/' + str(i)
        html = url_open(url).decode('utf-8')
        get_address(html, mm_collect)
    open_mm(mm_collect, pic_address)
    save_pic(pic_address)

if __name__ == '__main__':
    download_mm()
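
One likely cause worth checking: the image URLs are correct but every download is the same placeholder. That pattern is typical of hotlink protection, where the image server returns a substitute picture unless the request carries a Referer header pointing back to the site. This is an assumption about how mmjpg.com behaves, not something the post confirms. A minimal sketch of sending the header with `urllib.request` follows; the helper names `build_image_request` and `fetch_image` and the default referer value are hypothetical and chosen only for illustration:

```python
import urllib.request

USER_AGENT = ('Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 '
              '(KHTML, like Gecko) Chrome/70.0.3538.67 Safari/537.36')


def build_image_request(img_url, referer='http://www.mmjpg.com/'):
    """Build a request that carries both a User-Agent and a Referer header."""
    req = urllib.request.Request(img_url)
    req.add_header('User-Agent', USER_AGENT)
    # Assumption: the image server checks Referer to block hotlinking.
    req.add_header('Referer', referer)
    return req


def fetch_image(img_url, referer='http://www.mmjpg.com/'):
    """Download the image bytes (this performs a network request)."""
    with urllib.request.urlopen(build_image_request(img_url, referer)) as response:
        return response.read()
```

If this turns out to be the cause, `save_pic` could call something like `fetch_image(address, album_url)` in place of `url_open(address)`, passing the album page each image was found on as the referer.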


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
